Will Autonomous Security Kill CVEs?

How many potholes did you encounter on your way into work today? How many of them did you report to the city?

Vulnerability reporting works much the same way. Developers find bugs – and vulnerabilities – and don’t always report them. Unfortunately, the manual process to diagnose and report each one is a deterrent. That manual process is holding automated tools back. So, will autonomous security kill CVEs?

Software is Assembled

Software is assembled from pieces, not written from scratch. When your organization builds and deploys an app, you're also inheriting the risk from each and every one of those code components. A 2019 Synopsys report found that 96% of the code bases it scanned [caution: email wall] included open source software and up to 60% contained a known vulnerability.

The risks don’t stop there. Open source and third-party components are heavily used when you operate software. For example, 44% of indexed sites use the Apache open source web-server -- meaning a single exploitable vulnerability in the Apache web server would have serious consequences for all of those sites.

So, how do you determine if you’re using a known vulnerable building block? You consult a database. These databases may assume different names, but at the root of many of them is the MITRE CVE database.

Entire industries have been built on the ability to reference databases to identify known vulnerabilities in software. For example:

- Software Composition Analysis tools (e.g., BlackDuck, WhiteSource) allow developers to check build dependencies for known vulnerabilities.

- Container Scanners (e.g., TwistLock, Anchore) check built Docker images for out-of-date, vulnerable libraries.

- Network Scanners (e.g., Nessus, Metasploit) check deployed infrastructure for known vulnerabilities.
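To make the dependence on the database concrete, here is a minimal sketch of the lookup that all three kinds of tools perform in some form. Everything in it is hypothetical: the `Advisory` record, the `EXAMPLE-` identifiers, and the component names are placeholders I made up for illustration, not real CVE entries or any vendor's actual data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    identifier: str               # placeholder ID, not a real CVE number
    component: str                # name of the affected building block
    affected_versions: frozenset  # exact versions known to be vulnerable


# Hypothetical index; a real scanner would load this from a CVE-derived feed.
KNOWN_VULNERABILITIES = [
    Advisory("EXAMPLE-0001", "examplelib", frozenset({"1.0.0", "1.0.1"})),
    Advisory("EXAMPLE-0002", "otherlib", frozenset({"2.3.0"})),
]


def find_known_vulnerabilities(dependencies, index=KNOWN_VULNERABILITIES):
    """Return (component, version, advisory ID) for each dependency in the index."""
    findings = []
    for component, version in dependencies.items():
        for advisory in index:
            if advisory.component == component and version in advisory.affected_versions:
                findings.append((component, version, advisory.identifier))
    return findings


if __name__ == "__main__":
    deps = {"examplelib": "1.0.1", "otherlib": "2.4.0", "unlisted": "0.1"}
    print(find_known_vulnerabilities(deps))
```

Note what happens to `unlisted`: the scanner reports nothing, whether the component is safe or simply has bugs nobody indexed. The scan is only as good as the index behind it.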

But, here is the key question: where do these databases get their information?

Where Vulnerability Information Comes From...

Today, most vulnerability databases are created and maintained through huge amounts of manual effort. MITRE’s CVE database is the de facto standard, but it is populated by committed men and women who research bugs, determine their severity, and follow the manual reporting guidelines for the public good.

If there’s one thing we know, human processes don’t scale well. The cracks are beginning to show.

Here’s the problem: automated tools like fuzzing are getting better and better at finding new bugs and vulnerabilities. These types of automated vulnerability discovery tools don’t work well with the current manual process to triage and index vulnerabilities.

Google’s Automated Fuzzing

Consider this: automated fuzzing farms can autonomously uncover hundreds of new vulnerabilities each year. Let’s look at Google ClusterFuzz, since their statistics are public.

In Chrome:

- 20,442 bugs were automatically discovered by fuzzing.

- 3,849 – 18.8% of them – are labeled as a security vulnerability.

- 22.4% of all vulnerabilities were found by fuzzing (3,849 security vulnerabilities found by fuzzing divided by 17,161, the total number of security-critical vulnerabilities found).

Google also runs OSS-Fuzz, where they use their tools on open source projects. So far, OSS-Fuzz has found over 16,000 defects, with 3,345 of them labeled as security related (20%!).
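The percentages above are easy to check. The short sketch below recomputes them from the counts quoted in this section; the only assumption is that I treat "over 16,000" OSS-Fuzz defects as exactly 16,000, so that ratio is approximate.

```python
def share(part, whole):
    """Return part/whole as a percentage, rounded to one decimal place."""
    return round(100 * part / whole, 1)


chrome_fuzz_bugs = 20_442      # Chrome bugs found by fuzzing
chrome_fuzz_security = 3_849   # of those, labeled as security vulnerabilities
chrome_all_security = 17_161   # all security-critical Chrome vulnerabilities
oss_fuzz_defects = 16_000      # "over 16,000" in the text; treated as a round figure
oss_fuzz_security = 3_345      # OSS-Fuzz findings labeled security related

print(share(chrome_fuzz_security, chrome_fuzz_bugs))     # security share of Chrome fuzzing bugs
print(share(chrome_fuzz_security, chrome_all_security))  # share of Chrome vulnerabilities found by fuzzing
print(share(oss_fuzz_security, oss_fuzz_defects))        # security share of OSS-Fuzz defects
```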

Many of the security-critical bugs are never reported or given a CVE number. Why? Because it’s labor intensive to file a CVE and update the database. Even though fuzzers autonomously generate inputs that trigger bugs, shedding light on where the bug is and how to trigger it, researchers still opt not to report their findings. In fact, I wouldn’t be surprised if you could make a few thousand bucks a year taking Google’s OSS feed, reproducing the results, and claiming a bug bounty.

So far, we've learned:

- There are tools that can find thousands of defects a year.

- Many are security-critical. In the two examples above, we learned that approximately 20% of findings are labeled as security issues -- meaning hundreds of new vulnerabilities are being discovered a year.

- There is no way to automatically report and index these bugs.

- Yet we depend on indexes, like the MITRE CVE database, to tell us whether we’re running known vulnerable software.

Earlier this year Alex Gaynor raised the issue of fuzzing and CVEs, with a nice summary of responses created by Jake Edge. While there wasn’t a consensus on what to do, I believe Alex is pointing out an important issue and we shouldn't dismiss it. Awareness and acceptance are the first steps to finding a solution.

We’ve Evolved Before...

How we index known vulnerabilities has evolved over time. I think we can change again.

In the early 1990s, if you wanted to track responsibly disclosed vulnerabilities, you’d coordinate with CERT/CC or a similar organization. If you wanted the firehose of new disclosures, you’d subscribe to a mailing list like Bugtraq on SecurityFocus. Over time, vendors recognized the importance of cybersecurity and created their own databases of vulnerabilities. It evolved to a place where system administrators and cybersecurity professionals had to monitor several different lists, which didn’t scale well.

By 1999, the disjoint efforts were bursting at the seams. Different organizations would use different naming conventions and assign different identifiers to the same vulnerability. It started to become difficult to answer whether vendor A’s vulnerability was the same as vendor B’s. You couldn’t answer the question “how many new vulnerabilities are there each year?”

In 1999, MITRE had an “aha” moment and came up with the idea of a CVE List (common vulnerability enumeration). A CVE (Common Vulnerabilities and Exposures) is intended to be a unique identifier for each known vulnerability. To quote MITRE, a CVE is:

- The de facto standard for uniquely identifying vulnerabilities.

- A dictionary of publicly known cybersecurity vulnerabilities.

- A pivot point between vulnerability scanners, vendor patch information, patch managers, and network/cyber operations.

MITRE’s CVE list has indeed become the standard. Companies rely on CVE information to decide how quickly they need to roll out a fix or patch. MITRE has also developed a vocabulary for describing vulnerabilities called the “Common Weakness Enumeration” (CWE). We needed both, and they served their intended purpose well: making sure everyone is speaking the same language.

CVEs can help executives and professionals alike identify and fix known vulnerabilities quickly. For example, consider Equifax. One reason Equifax was compromised was that they had deployed a known vulnerable version of Apache Struts. That vulnerability was listed 9 weeks prior in the CVE database. If Equifax had consulted the CVE database, they would have discovered they were vulnerable a full 9 weeks before the attack.

Cracks are Widening in the CVE System

The CVE system is OK but doesn’t scale to automated tools like fuzzing. These tools can identify new flaws at a dramatically new speed and scale. That’s not hyperbole: remember, Google OSS-Fuzz – just one company running a fuzzer – identified over 3,000 new security bugs over 3 years.

But many of those flaws are never reported to a CVE database. Instead, companies like Google focus on fixing the vulnerabilities, not reporting them. If you’re a mature DevOps team, that’s great; you just pull the latest update on your next deploy. However, very few organizations have mature DevOps processes that let them upgrade all the software they depend on overnight.

I believe we’re hitting an inflection point where real-life, known vulnerabilities are becoming invisible to automated scanning. In the beginning, we mentioned entire industries exist just to scan for known vulnerabilities at all stages of the software lifecycle: development, deployment, and operations.

Research Hasn’t Quite Caught Up With The Problem, But It Needs To. There are Several Challenges...

Companies want to find vulnerabilities, but also are often incentivized to downplay any potential vulnerability that isn’t well-known or super critical. Companies want to understand the severity of an issue, but judging severity is often context-dependent.

First, the word “vulnerability” is really squishy, and severity is dependent on reachability and exploitability. The same program containing a vulnerability can be on the attack surface for some software, but not for others. For example, Ghostscript is a program for interpreting PostScript and PDF files. It may not seem to be on the attack surface, but it’s used in MediaWiki (the wiki that powers Wikipedia) to process potentially malicious user input. How would you rate the severity of a Ghostscript vulnerability in a way meaningful to everyone?

Second, even the actual specifications of a vulnerability are squishy. A MITRE CVE contains very little structured information. There isn’t any machine-specified way to determine if a bug found qualifies for a CVE. It’s really up to the developer, which is appropriate when developers are actively engaged and can investigate the full consequences of every bug. It’s not great otherwise.

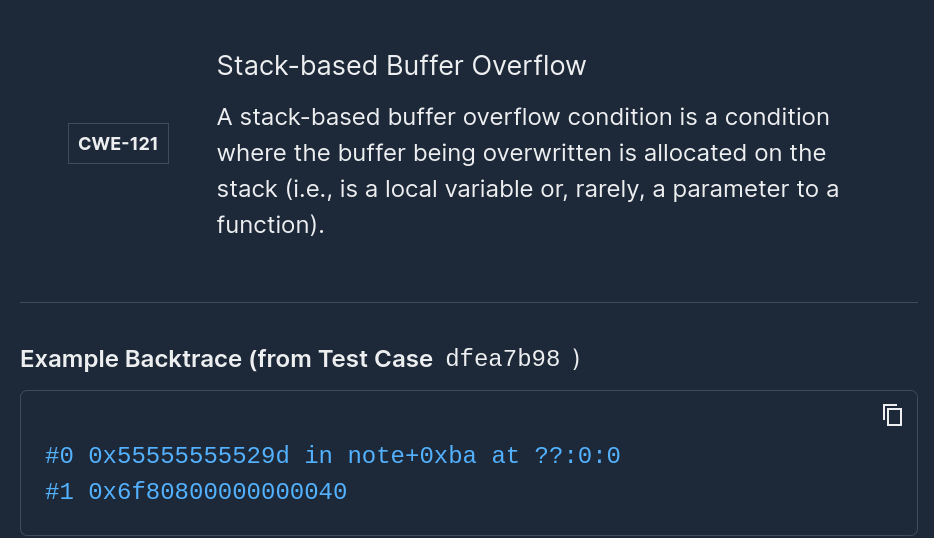

Third, the naming for various types of vulnerabilities (or, in MITRE-speak, “weaknesses”) is squishy. CWEs were intended to become the de facto standard for how we describe a vulnerability, just like CVEs are for listing specific flaws. Automated tools today can find buffer overflows and demonstrate them, but the same bug could be labeled with the CWE type for an input validation bug, a buffer overflow, or an out-of-bounds write, each of which can be argued to be technically correct.

Overall, I believe we need to rethink CVEs and CWEs so that automated tools can correctly assign a label and calculate a severity. Developers don’t have time to investigate every bug and its potential security consequences. They’re focused on using their creativity to write code and, when the occasion arises, fixing the bug before them; not making sure anyone using the software has the latest copy.

We also need a machine-checkable way of labeling the type of bug, replacing the informal CWE definition. Today CWEs are designed for humans to read, but that’s too underspecified for a machine to understand. Without this, it’s going to be hard for autonomous systems to go the extra mile and hook up to a public reporting system.
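As a rough illustration of what "machine-checkable" could mean, the sketch below defines one bug class as a predicate over observed facts rather than as prose. It is only a toy under my own assumptions: the `MemoryAccess` record and the label strings are hypothetical, and real tooling would need far richer definitions than a single bounds check.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MemoryAccess:
    kind: str        # "read" or "write"
    offset: int      # byte offset from the start of the buffer
    size: int        # number of bytes accessed
    buffer_len: int  # size of the buffer in bytes


def classify(access: MemoryAccess) -> Optional[str]:
    """Return a bug label decided purely by the recorded access, or None if in bounds."""
    in_bounds = access.offset >= 0 and access.offset + access.size <= access.buffer_len
    if in_bounds:
        return None
    return "out-of-bounds-write" if access.kind == "write" else "out-of-bounds-read"


if __name__ == "__main__":
    # A 4-byte write starting at the end of a 16-byte buffer.
    print(classify(MemoryAccess(kind="write", offset=16, size=4, buffer_len=16)))
```

Two tools that observe the same access would always produce the same label here, which is exactly the property the informal definitions lack.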

Finally, we need to think about how we prove whether a vulnerability is exploitable. In 2011, we started doing research into automated exploit generation with the goal of showing whether a bug could result in a control flow hijack. [We turned off OS-level defenses that might prevent an attacker from exploiting a vulnerability, such as ASLR. The intuition is that the exploitability of an application should be considered separately from whether a mitigation makes exploitation harder.] In the 2016 DARPA Cyber Grand Challenge, all competitors needed to create a “Proof of Vulnerability,” such as showing you could control a certain number of bits of execution control flow. Make no mistake: this is early work and there is a lot more to be done to automatically create exploits, but it was a first step.

However, one question to consider is whether “Proofs of Vulnerabilities” are for the public good. The problem: just because you can’t automatically prove a bug is exploitable (or even manually) doesn’t mean the bug isn’t security critical.

For example, in one of our research papers we found over 11,000 memory-safety bugs in Linux utilities, and we were able to create a control flow hijack for 250 – about 2%. That doesn’t mean the other 98% are unexploitable. It doesn’t work that way. Automated exploit generation confirms a bug is exploitable, but it doesn’t reject a bug as unexploitable. It also doesn’t mean that the 250 discovered were exploitable in your particular configuration.

We saw similar results in the Cyber Grand Challenge. Mayhem could often find crashes for really exploitable bugs, but Mayhem wasn’t able to create an exploit. The same was reported by other teams. Thus, just because an automated tool can’t prove exploitability doesn’t mean the bug isn’t security critical.
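One way to keep this asymmetry straight is to encode it in the result type itself. The sketch below is hypothetical (the `Verdict` enum and both functions are names I invented), and it uses the round figures from the Linux utilities example, treating "over 11,000" as 11,000.

```python
from enum import Enum


class Verdict(Enum):
    PROVEN_EXPLOITABLE = "proven exploitable"
    UNKNOWN = "unknown"
    # Deliberately no NOT_EXPLOITABLE value: a failed exploit attempt proves nothing.


def verdict_from_attempt(exploit_generated: bool) -> Verdict:
    """Map the outcome of an automated exploit attempt to a one-sided verdict."""
    return Verdict.PROVEN_EXPLOITABLE if exploit_generated else Verdict.UNKNOWN


def summarize(total_bugs: int, exploits_generated: int) -> dict:
    """Count how many bugs fall under each verdict."""
    return {
        Verdict.PROVEN_EXPLOITABLE: exploits_generated,
        Verdict.UNKNOWN: total_bugs - exploits_generated,
    }


if __name__ == "__main__":
    for verdict, count in summarize(11_000, 250).items():
        print(f"{verdict.value}: {count}")
```

With those figures, 250 bugs are proven exploitable and 10,750 remain unknown, not safe.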

One Proposal

I believe we need to set up a machine checkable standard for when a bug is likely to be of security interest. For example, Microsoft has a “!exploitable” plugin for their debugger, and there is a similar version for GDB. These tools are heuristics: they can have false positives and false negatives.

We should create a list – similar to CVEs – where fuzzers can submit their crashes, and each crash is labeled as likely exploitable or not. This may be a noisy feed, but the goal isn’t for human consumption – it’s to give a unique identifier. Those unique identifiers can be useful to autonomous systems that want to make decisions. It can also help us identify the trustworthiness of software. If a piece of software has 10 bugs that have reasonable indications that they are real vulnerabilities, but no one has proved it, would you still want to field it?
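Here is a minimal sketch of what one entry in such a feed might look like. All of it is an assumption on my part: the `Crash` fields, the `CRASH-` identifier scheme (a hash of the project, signal, and top stack frames), and especially the labeling rule, which is a toy heuristic and not the actual logic of “!exploitable” or its GDB counterpart.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Crash:
    project: str
    signal: str         # e.g. "SIGSEGV"
    access: str         # "read", "write", or "exec"
    fault_address: int  # address that triggered the fault
    stack: tuple        # function names, innermost frame first


def crash_id(crash: Crash, frames: int = 3) -> str:
    """Stable identifier derived from the project, signal, and top stack frames."""
    key = "|".join([crash.project, crash.signal, *crash.stack[:frames]])
    return "CRASH-" + hashlib.sha256(key.encode("utf-8")).hexdigest()[:12]


def likely_exploitable(crash: Crash, null_page: int = 0x1000) -> bool:
    """Toy heuristic: write/exec faults away from NULL are flagged. Can be wrong both ways."""
    near_null = crash.fault_address < null_page
    return crash.access in ("write", "exec") and not near_null


def feed_entry(crash: Crash) -> dict:
    """Build the record an autonomous system would submit to, or read from, the feed."""
    return {
        "id": crash_id(crash),
        "project": crash.project,
        "likely_exploitable": likely_exploitable(crash),
    }


if __name__ == "__main__":
    crash = Crash("examplelib", "SIGSEGV", "write", 0x7FFF0000,
                  ("copy_field", "parse_header", "main"))
    print(feed_entry(crash))
```

Hashing only the top few frames means the same bug reached through different callers collapses to one identifier, at the cost of occasionally merging distinct bugs. That trade-off is acceptable for a noisy, machine-consumed feed.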

I don’t think the answer is to bury them, but to index them.

I also don’t think I’m alone. Google, Microsoft, and others are prioritizing their developer workflow more and more on autonomous systems. It makes sense to make that information available to everyone who fields the software as well.

I started this article by asking whether autonomy will be the death of CVEs. I don’t think so. But I do think autonomous systems will need a separate data source, something designed for machines and updated much faster than a manually curated list, to be effective.

Key Takeaways

We covered a lot in this post, so here's a quick recap on the key takeaways:

- Organizations should continue to use scanners for known vulnerabilities with the understanding that they don’t represent the complete picture.

- The appsec community may want to consider how to better incorporate tools, like fuzzers, into the workflow. We're potentially missing out on a huge number of critical bugs and security issues.

- One proposal:

  - Add structure to CVE and CWE databases that is machine parsable and usable.

  - Create a system where autonomous systems can not only report problems, but also consume the information. This isn’t the same fidelity as human-verified “this is how you would exploit it in practice”, but it would help us move faster.

Agree or disagree? Let me know.

Originally published at CSOOnline.
