The Hacker Mind Podcast: Hacking With Light And Sound

If you think hacking only involves the use of a keyboard, then you’re probably missing out. What about using light? What about using sound?

In this episode, The Hacker Mind looks at some of the work Dr. Kevin Fu has been doing at the University of Michigan, such as using laser pointers to pwn voice-activated digital assistants, and using specific frequencies of sound to corrupt or crash hard disk drives.

EP 06: Hacking With Light And Sound

Vamosi: In the fall of 2019, viewers of Good Morning America awoke to hear the following:

Good Morning America: [Alexa, dim the lights] There’s a new warning this morning for everyone using Alexa, Siri, Google Home, or any of those wildly popular voice-controlled digital assistants. Researchers claim they’ve found a flaw that allows hackers to access your device from hundreds of feet away, giving them the ability to unlock your front door, even start your car. [It’s 9:43]

Vamosi: Sounds crazy, but it was true. The flaw uses light -- say, from a laser pointer pen -- to simulate speech. But this is just the tip of the iceberg. Every day we are surrounded by tiny sensors that we more or less take for granted. From the tiny microphones on our mobile devices and digital assistants to the light-detecting radars in our cars -- these are single-purpose semiconductors, right? Maybe not.

There’s a group of bleeding-edge hackers who are looking at secondary channels of attack -- such as using pulsing light to imitate voice commands or specific frequencies of sound to simulate specific motion-related vibrations. And soon it’s possible these new hackers may be able to demonstrate how these and other basic laws of physics can be used to take control of our digital assistants, our medical devices, even our cars.

Welcome to The Hacker Mind, an original podcast from ForAllSecure. It's about challenging our expectations about the people who hack for a living. I’m Robert Vamosi and in this episode I’m discussing the weird science of how the physics of light and sound -- not keyboards or code -- can be used to compromise electronic devices and the consequences of that in the real world.

Say, you’re sitting at home one night and all of a sudden the lights dim, your garage door opens, and loud music starts playing -- music you don’t really like. You think maybe it’s the device malfunctioning but really it’s the kids across the street playing with a laser pointer pen.

Fu: That's one of the consequences. That's right, it's really general purpose. You shine a laser and you pulse it on and off at a particular rate, and it causes the microphone to hear clear intelligible speech that the adversary controls.

Vamosi: That’s Dr. Kevin Fu, one of the discoverers of this phenomenon. He’s a researcher at the University of Michigan and has been pioneering a new category of acoustic interference attacks.

Fu: So there are all sorts of ways of turning sensors into unintentional demodulators, which is fancy speak for, you know, the kid who can hear radio stations with their braces by accident. You can almost think of it like an acoustic sleight of hand. This is all about trying to prevent these secondary channels of an adversary injecting false information into a sensor.

Vamosi: In this case the sensor is a tiny microphone, which consists of a thin membrane. As sound hits the membrane, it vibrates, like our eardrums. Except instead of sending signals to our brains, the membrane triggers an electrical response. How much or how little charge it sends depends on the sound. So a microphone is looking for sound, which is a wave. But what if we have light, which, in the realm of physics, can also be a wave? Light isn’t one of the intended inputs for a microphone, but it just so happens to work.

Fu: First of all, there are intentional receivers, and then there are unintentional receivers. If you're talking about intentional receivers, like, hey, I have a light sensor, would you please tell me how bright the room is, that's one thing. But our laser work was about a microphone, and a microphone is not designed to receive light. But it turns out it does. And so most of our work is about sensors that don't advertise being able to sense other modalities like sound or light, but do.

Vamosi: So you’re probably thinking that must be hard, training the laser to emulate human speech. But really it’s not.

Fu: It's not even that hard. In fact, it's so easy we demonstrated this at an elementary school museum where the museum shows kids how to use lasers to inject voices. I show six-year-old children how to put their voice in here with the laser beam. It's really not that hard once you understand the physics. It's effectively creating an optical AM radio station. That's effectively what we're doing: we're modifying the amplitude of the current, or the intensity of the laser, and that causes a vibration on the membrane of the microphone that is then misinterpreted as speech.
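
The "optical AM radio station" idea can be sketched in a few lines of Python. This is only a rough illustration, not the researchers' actual setup: the function names, the 200 mA bias, and the 150 mA modulation depth are all assumptions chosen for the example, and a 1 kHz tone stands in for recorded speech. The point is simply that the audio waveform rides on top of a constant laser drive current, so the light intensity carries the shape of the sound.

```python
import math

def tone(freq_hz, sample_rate_hz, n_samples):
    """Generate a unit-amplitude sine tone as a stand-in for recorded speech."""
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate_hz)
            for n in range(n_samples)]

def am_modulate(audio, bias_ma, depth_ma):
    """Map audio samples in [-1, 1] onto a laser drive current in milliamps.

    The signal rides on a DC bias so the current never goes negative.
    Both values are hypothetical, picked only for illustration.
    """
    if depth_ma > bias_ma:
        raise ValueError("modulation depth must not exceed the DC bias")
    return [bias_ma + depth_ma * s for s in audio]

def recover_envelope(current_ma):
    """Subtract the mean: a crude model of the signal the microphone reports."""
    mean = sum(current_ma) / len(current_ma)
    return [c - mean for c in current_ma]

# Ten full cycles of a 1 kHz tone sampled at 48 kHz.
audio = tone(freq_hz=1000, sample_rate_hz=48000, n_samples=480)
drive = am_modulate(audio, bias_ma=200.0, depth_ma=150.0)
heard = recover_envelope(drive)
```

In this toy model, `heard` is just the original tone scaled by the modulation depth, which is why the microphone output can be mistaken for speech the adversary chose.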

Vamosi: So every time something really cool like this comes along, I often wonder: did they expect to find something? I mean, how does one happen upon an unintended consequence like this?

Fu: Right. Well, for the one on the light source for microphones in particular, I think you have to give a lot of credit to Takeshi Sugawara from Tokyo. He was one of the collaborators on the project, and he had this idea of using lasers to inject things into microphones. I can't speak for him, but I think a lot of it is just about sheer curiosity. I know he was coming from a background of side channels, and lasers have been used in the past to induce faults. But I'm not aware of any research before this that would use a laser to deliberately control the output of the sensor.

Vamosi: And just to be clear, acoustic interference attacks are active, unlike side channel attacks, which are passive.

Fu: Well, hard to say. You could consider this a right side channel attack, I suppose, but usually when someone says side channel they're assuming it's about reading information out, and this is about putting information in. So it depends. We're on the frontier. We're inventing new things, new sub-disciplines, so it's not 100% clear.

Vamosi: This is bleeding-edge research, so much so, there’s little in the way of tools that can be used in the lab.


Fu: It is so fundamental. We've even had to build our own laser interferometers in the laboratory to do measurements. The tools are rather blunt. You write a program in MATLAB. There aren't tools you can buy right now, so this is classic R&D. I would expect there'll be some tool sets, probably in the next five to 10 years, but in academia we're always working on the very early stage of the technology.

Vamosi: So how did we get here? How does one even start to think about these kinds of attacks?

Fu: Well, I had been working for some time on things involving light. I'm just always curious how sensors transduce the analog into the digital, and from my experience in computer security I know most failures happen at the boundaries between abstractions, where there's undefined behavior. I felt like this was an area ripe for undefined behavior.

Vamosi: Okay. There’s a lot to unpack here. Let’s start with boundaries. The transition from one system to another has always been one of the weakest links in the security chain.

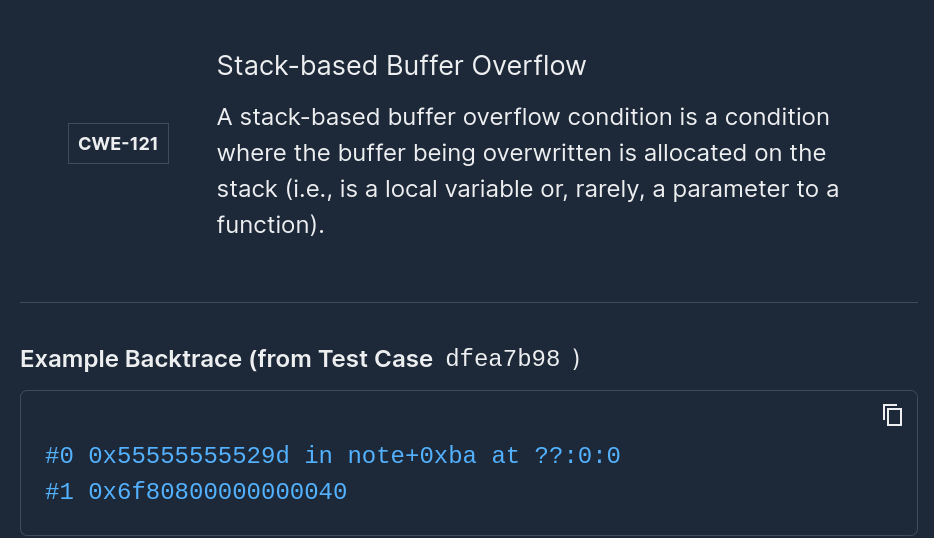

Fu: That's right. So in my experience, most new classes of vulnerabilities, not just your next buffer overflow but entirely new thinking about entirely new vulnerable surfaces, typically happen at boundaries, and there are some really good reasons why this is. It's like, where do you go to fish? Well, you know, you look for where there's going to be a good supply.

Vamosi: Boundaries are the classic go-to minefield for discovering new software vulnerabilities. Turns out it’s the same here with micro-electromechanical system sensors.

Fu: The reason why it's interesting is you typically have two different groups of engineers on either side of the interface. And anytime you have an interface, even if it's well defined, even if there's a specification, engineers start to assume things about the other side. And so there's often an abdication of responsibility for certain things. The classic example would be the buffer overflow. Are you bounds checking? Whose job is it to do bounds checking? Is it the caller or the callee? Well, in this case, in the analog sensor world, it's all about: how do we know that the device is actually sending out actual data of the sensed environment, versus some tertiary way that an adversary is able to trick the sensor into falsely transducing information?

Vamosi: So think about all the sensors we have today - in our homes, in our cars, and in our cities -- and the many more we’ll have tomorrow. That’s a lot of sensor data, and therefore plenty of opportunity for mischief. But before we get too deep, what are transducers? And how exactly do you transduce information?

Fu: Sure. So, a transducer, in layman's terms, or engineers' layman's terms, will take some kind of physical phenomenon, something about our world, maybe how light it is in the room or what the temperature is, and then create electronic patterns that can be interpreted by a computer to represent that phenomenon. So, again, it might be giving a digital readout on temperature. But perhaps in reality what the sensor's doing is looking at the voltage differential between two different metals to try to interpolate what the temperature in the room is. Another example might be acceleration: you'd like to know how fast the car is going. And so you create a sensor that transduces certain physical phenomena into analog or digital values representing your acceleration. One example would be a MEMS semiconductor that effectively changes its capacitance based upon how it's accelerating through space. But the key take-home of all these transducers is that it's a proxy for reality. 99% of the time, yeah, the temperature sensor's telling you the temperature, but there are other ways to cause that sensor to transduce different values.
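
The "proxy for reality" point can be made concrete with the two-metals temperature example. The sketch below is a deliberately simplified linear model, not a real sensor driver: the sensitivity constant is a rough figure for a common thermocouple type, and the function name and voltages are assumptions for illustration. The software only ever sees a voltage, so any extra voltage an adversary manages to induce is read as extra temperature.

```python
# Rough sensitivity of a common (type K) thermocouple, in microvolts per
# degree Celsius. Treated here as a constant, which is a simplification.
SEEBECK_UV_PER_C = 41.0

def temperature_from_voltage(voltage_uv, reference_c=25.0):
    """Convert a measured junction voltage into a temperature estimate.

    The computer never observes temperature directly, only this voltage.
    """
    return reference_c + voltage_uv / SEEBECK_UV_PER_C

# Genuine signal: 410 microvolts above the reference junction.
honest = temperature_from_voltage(410.0)

# Same room, but with a hypothetical 205 microvolts of injected interference.
spoofed = temperature_from_voltage(410.0 + 205.0)

print(f"honest reading:  {honest:.1f} C")
print(f"spoofed reading: {spoofed:.1f} C")
```

Nothing in the conversion can tell the two cases apart, which is the gap the rest of the conversation is about.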

Vamosi: So these MEMS semiconductors, these chips in our phones and in our vehicles, they are just creating proxies for the real world for the machine learning and AI systems. Let’s look at a common sensor, a Light Detection and Ranging sensor, also known as LIDAR. It’s a chip-based, radar-like sensor that uses light signals, and there are actually several of them embedded within the bumper and side panels of most cars. What LIDAR does is tell an advanced driver-assistance system, or ADAS, how close or how far an object is within its field of view. Think pre-collision warning. An attack on this chip has definite consequences for the future of driving, particularly with autonomous vehicles.

Fu: Oh yes, in fact, we have a number of papers recently published, just last winter actually, on LIDAR and autonomous vehicles: how to use lasers to take control of that and what some of the limits are. So it's extremely relevant to autonomous vehicles.

Vamosi: And, really, we’re talking about any device with a real-world sensor, so this type of attack affects a variety of fields, and a variety of uses.

Fu: It does: medical devices, space, you name it. Anything kinetic, linked to a feedback loop where there's a sensor telling a computer to change some kind of cyber-physical system, there's going to be a problem.

Vamosi: So if the sensor is a proxy for the real world, a boundary between that and the digital world, and an adversary is actively manipulating the data that device receives, that’s gotta influence machine learning and AI as well. You know, garbage in, garbage out.

Fu: It does impact AI. In fact, you cannot separate this from the AI and machine learning layers. There's a huge amount of interesting work going on, since the sensor now doesn't just go to any old computer; the computer's doing some kind of machine learning-based classification or computer vision. It creates a host of intellectually challenging geometry problems. But at the end of the day it's a very practical problem, because cars are ubiquitous, and you want to make sure that an adversary with a laser pointer pen can't simply cause havoc with an autonomous vehicle.

Vamosi: Okay, shouldn’t all this be covered in the SDLC, the software development lifecycle, in the design phase, in threat modeling, you know, where developers and engineers first need to articulate all the inadvertent attacks such as these?

Fu: So I think that is part of the problem; lack of a threat model is part of the problem. And there's no bad guy here in terms of the designing and manufacturing. This is just a new area, and it's sort of out of scope of most professionals' thinking. Nobody would have thought that if you just happen to shake a phone a certain way, you can cause it to have deliberately false accelerometer values.

Vamosi: So, for example, you can use sound waves to hack your Fitbit and reach your step goal through acoustic interference. That’s crazy.

Fu: So, yes, it's part of the threat model, and from that you can actually derive some good news. Because the moment you start to have sensible threat models, you can start to have good defenses, and so I would say there's quite a lot of opportunity for quick wins here by having reasonable threat models.

Vamosi: In 2018, a group of researchers found that intentional acoustic interference -- sound -- can produce unusual errors in the mechanics of magnetic hard disk drives. This, they said, could lead to damage to integrity and availability in both hardware and software, such as file system corruption and operating system reboots. They further found that an adversary without any special-purpose equipment can co-opt built-in speakers or nearby emitters to cause persistent errors in computer systems. You would think all this research must be terrifying to the semiconductor industry. It turns out, they’ve been pretty great about it.

Fu: Oh, I would say the interactions with the semiconductor manufacturers have been universally positive. I would say 99% of the conversations end up with, "Oh, we never thought of that, that's pretty clever. Thanks for bringing this to our attention. What do you think we should do?" And I'm really pleased, especially with one of the manufacturers I would say is the most progressive, Analog Devices. They make all sorts of sensors for automobiles and other applications, but when we found a problem in accelerometers across pretty much all the manufacturers, they had probably the best response, in that they sent out a document through US-CERT that explained the physics behind how they recommend their customers defend against these problems. And it was so detailed, it went into some of the trigonometry and the sine waves. For instance, they talked about, hey, if you put this accelerometer on one of your circuit boards, make sure to cut a trench around it, drill holes here and here, because that changes the sound waves to make it harder for an adversary to get things in. So that was a positive experience.

Vamosi: Given these MEMS sensors, these chips like microphones, accelerometers, and LIDAR are already out in the field, in our devices, embedded in our streets and cities, what are some mitigations?

Fu: Ah, well, there are sort of two approaches, short term and long term. Short term, there are some bolt-on defenses that are rather brittle. I wouldn't say they give a very satisfying guarantee, but they act as sort of a band-aid. It's very application specific. For instance, we found some problems in hard drives where you could disable hard drives by sending certain sound waves. And it turns out there are certain things you can do with the firmware: you can actually do a firmware update to a hard drive to effectively change some of the differential equations and how it handled damping of vibrations, and you can stop an adversary from causing your hard drive to stop or get corrupted. But it's very specific to the hard drive; it doesn't solve the problem in general. And so at the end of the day the long-term solution is really about first starting off with the threat models. And then what I advocate for is a new way of delivering information from sensors to the microprocessor, and that is having auxiliary data, or you might even call it a tag, such that the applications and the software stack can verify the trustworthiness of the sensor output before taking an action. It'd be great if an autonomous vehicle, before it makes a turn or hits the brakes or hits the accelerator, could have some measurable confidence that whatever sensor value it got is not maligned. And so part of this is going to be about changing the semiconductors to have these additional outputs as verification checks on the trustworthiness of the sensor output.
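
One way to picture the "tag" idea is a sensor reading that travels together with a trust score, which the software checks before acting. The sketch below is purely hypothetical: no such interface is described in the episode beyond the general concept, and the `TaggedReading` type, the confidence scale, and the thresholds are all assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TaggedReading:
    """A sensor value plus a hypothetical trustworthiness tag from the chip."""
    value: float       # e.g. distance to the nearest obstacle, in meters
    confidence: float  # 0.0 (untrusted) to 1.0 (fully trusted); assumed scale

def choose_action(reading, obstacle_threshold_m=10.0, min_confidence=0.9):
    """Decide what to do, refusing to act on readings that fail the check."""
    if reading.confidence < min_confidence:
        # Do not brake or accelerate on suspect data; defer to a safe fallback.
        return "fallback"
    if reading.value < obstacle_threshold_m:
        return "brake"
    return "continue"

print(choose_action(TaggedReading(value=4.0, confidence=0.98)))   # brake
print(choose_action(TaggedReading(value=4.0, confidence=0.30)))   # fallback
print(choose_action(TaggedReading(value=50.0, confidence=0.98)))  # continue
```

The design choice worth noticing is that the low-confidence case is handled before the value is even looked at, so a spoofed reading cannot trigger either a false brake or a false all-clear.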

Vamosi: So, we know light and sound can affect some sensors, we know the physical material makes some semiconductors more or less vulnerable, and it sounds like there are active mitigations available already. So we’ve mastered acoustic interference, right?

Fu: Let's see. Well, I think there really is still a lot of mystery. There are a lot of questions, such as: well, why does this work? We know how it works. But we've talked with a number of physicists, we've talked with the manufacturers, and it's a head scratcher. So I think there's still a lot of very interesting science and engineering to do here, just because it's so rare to find a problem with these kinds of fundamental unknowns. And I think it's driven by just curiosity that we've gotten here, and I think there's a lot more to learn.

Vamosi: So some of this-- for example, why it just works-- is not known, at least not now.

Fu: The closest analogy I can think of is brain surgery. There are a lot of things done in brain surgery where you don't know why it works; it just works. And there are just huge gaps in our knowledge. So it could be 10 years, could be 20 years, who knows. But we are moving forward in the laboratory, designing carefully controlled experiments, the classic null hypotheses. I think a lot of these will make great high school assignments in the future. So it's not necessarily rocket science, but if you put all the layers together it becomes complicated rather quickly, especially when you start asking questions about automated tools, and those tools still have to be created.

Vamosi: At the moment, though, a lot of these are still theoretical attacks.

Fu: So we work in the laboratory. We work on future threats 10, 20 years out, so I'm not aware of any of these in the wild. But I view this as a way to get out in front. These are obvious problems once you look at it. And the really hard part is, okay, how do we defend these things, how do we design these problems out? And so that's where we spend a lot of our mindshare, thinking about how we change the interfaces and how we change some of the threat models such that we don't have to worry about these problems.

Vamosi: The good news is that what we’re talking about today isn’t yet in the realm of active threats, so there’s plenty of time to work out the mitigations. Meanwhile it’s great material for future Black Hats and Def Cons. And future episodes of The Hacker Mind. But it’s also a great reminder that just because something is built to perform one way doesn’t mean that a hacker won’t come along and maybe get that device to work in another way. That’s what hackers do, they think differently. Like shining a light on a microphone. Or using sound to fake Fitbit steps, or disable your hard drive. Don’t say I didn’t warn you.

For The Hacker Mind, I remain your master of all things light and sound, Robert Vamosi.
