The Hacker Mind Podcast: Hacking OpenWRT

For three years OpenWRT had a severe validation problem with its download package manager, until a fuzz tester found and reported the vulnerability.

In this episode, Guido Vranken talks about his approach to hacking, about the differences between memory safe and unsafe languages, his use of fuzz testing as a preferred tool, and how he came to discover the validation error in OpenWRT, as well as a serialization error in cereal, and other vulnerabilities.

Listen to EP 11: Hacking OpenWRT

Vamosi: Your router is very important. It’s what stands between you and the internet. The entire internet. So all your home or office devices connect to it, and it then connects to your provider. The same is true in reverse. If I’m coming from the internet, I can be stopped at your router. But I know that in the past some manufacturers have been slow to update their routers, and that they bundle a lot of extra stuff. There are stories about open gateways to cloud storage intended as a feature, yet in reality that’s just opening my router to the internet. So what am I going to do?

Enter OpenWRT. As the name suggests, it is open source. I heard about it through a few talks at Black Hat and DEF CON over the years. It’s replacement firmware that you install instead of the firmware that came with your router. This of course means it also has all sorts of bells and whistles, but you get to turn them on or off. Think of it as a Swiss army knife. I can now configure my router to work specifically with my networks.

This is a story about how everything was going great with OpenWRT except for one thing. The updates. This is a story about the hacker who discovered that while OpenWRT had all the right security measures in place, a developer somewhere, at some time, left an extra space in the code that validates the SHA hash value against the update about to be installed. And that single space in the code… opened the possibility that someone could load a malicious executable on your router or other internet-connected device under the simple guise of a legitimate product update.

In a moment you’ll meet the hacker who found that vulnerability.

Welcome to the Hacker Mind, an original podcast from ForAllSecure. It’s about challenging our expectations about people who hack for a living. I’m Robert Vamosi and this episode I’m talking about someone who consistently finds new and interesting vulnerabilities in some rather unusual places.

This is a story about Guido Vranken. He is a professional hacker. And he has found some amazing vulnerabilities in some high profile software projects such as cereal and OpenWRT. And he hasn’t been doing it for all that long. So one has to wonder, how does he choose his targets?

Vranken: Depends. I sometimes work for clients and then I have to audit client code. There are also bug bounties, so software that has a good bug bounty program is especially attractive to me because it makes money. And sometimes it's also pure interest or curiosity. For example, I've been working on a fuzzer which does cryptography. I don't get directly paid for that, but it’s just a strong desire to find the bugs in some of those applications. So it's a mix of whether it's profitable or whether it's interesting to me. So it depends.

Vamosi: Fuzzing, in case you didn’t already know, is the process of feeding invalid inputs into software and monitoring its behavior. Does it crash? Does it do something unexpected, and could that lead to a vulnerability that can be exploited? Because fuzzing is so powerful and comprehensive, this led Guido to consider fuzzing as his tool of choice.

Vranken: It’s funny, actually. Before I found out about fuzzing, I was doing manual code review and I was just reading the code for hours on end. I had heard about fuzzing, but I just thought it can’t be that good; my mind is much better. So it actually took a while before I accepted it, before I tried fuzzing. And when I tried it, I realized that it has very strong potential. These days I still write some code, of course, but fuzzing is the most important part because it's fairly effective. The appeal of fuzzing is that it is kind of a reward system. For example, if you find a bug then you get another update and you get a feeling, yes, I found a bug, and again another bug, and another bug, and it's very rewarding. So that's one of the appeals of fuzzing to me, and it's just a very effective tool to do the job.

Vamosi: Is fuzzing a commonplace tool or more of a niche tool?

Vranken: Well, there are various types of vulnerability research. Like I said, a lot of people focus exclusively on web vulnerabilities and might not be exposed to fuzzing that much. But I get the sense that among people who are involved with C code and C++ code and Go and Rust, fuzzing is increasingly popular because it's very effective. So I get the sense that it's becoming ubiquitous, and it's an essential tool for checking the safety of code.

Vamosi: Guido doesn’t just use fuzzing. He of course also uses a wide range of tools.

Vranken: Well, I use Vim as an editor. It's a programmable editor, so it's very useful if you have to change a lot of things in the same way; you can do it with one command. So I use that a lot. I use ctags, so you can extract the function names and other symbols from source code and then find them easily. Let's see what else I use. I use Clang as a compiler because it has a lot of features for fuzzing, like built-in fuzzing support, the address sanitizer, and the undefined behavior sanitizer. And let's see, what else do I use? Those are basically my basic tools. Sometimes I write Python scripts to do stuff, so Python is something I use a lot. Sometimes I need to do calculations, and I usually do that in Python: I type in what I want to know and then I get the answer. So, going back to the tools I use, I usually don't have to reverse engineer code, so I don't use tools like IDA Pro and stuff like that, which people use who are into malware reverse engineering. I've done such things in the past, but these days I focus mostly on fuzzing and finding bugs. So those tools I mentioned are mostly the ones I use.

Vamosi: There are the tools that every hacker uses, and there’s something more. Guido describes it as creativity. It’s how he looks at a project.

Vranken: Well, I think how it usually works is, when you have a client, they give me the code and the objective is to find bugs, and the way I do that is up to me. So I get the creative freedom to do it any way I want. Sometimes it's very straightforward: you just write a fuzzing harness. But sometimes there are more complex challenges. For example, with the cereal fuzzers I wrote for ForAllSecure, there was a lot of novel stuff, like comparing whether serialized things decode back into the exact same input. So it requires thinking about how a program can break, and then writing a harness that plays into this.

Vamosi: What’s a fuzzing harness? In fuzzing you always need an input. Sometimes the software provides that. Sometimes you want to fuzz an aspect of a protocol or a whole library that doesn’t necessarily have obvious inputs, so you need to create what’s called a harness, a way of focusing the fuzzer.

Vranken: Okay, so say you have a program that you want to test for bugs. For example, OpenSSL is fairly well known. It's a library. Then what you do is create an entry point into that library. The library has all kinds of functions, for example a function for connecting to a server, and you need to create an entry point between the fuzzer and the library. It's like a bridge between the fuzzer and the library: the harness, which is a .c file, needs to call the library with certain data. A harness can be very simple, just 10 lines or so, or it can be incredibly complex. For example, I've been working on a fuzzer which is now 32,000 lines, 132,000 lines, so you can make it as complex as you want and you can keep adding new tests and new features and so on. So it's basically a dynamic test suite. I guess we could call it that.
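To make the idea of a harness concrete, here is a minimal sketch in Python rather than the C that Guido describes. Everything in it is an assumption made for illustration: `parse_length_prefixed` is a made-up stand-in for a library function, `fuzz_one_input` is the harness, and `run_random_fuzzer` is a crude substitute for a real fuzzing engine, which would choose inputs far more cleverly.

```python
import random


def parse_length_prefixed(data: bytes) -> bytes:
    """Hypothetical library function under test.

    The first byte declares the payload length; the rest is the payload.
    """
    if not data:
        raise ValueError("empty input")
    length = data[0]
    payload = data[1:1 + length]
    if len(payload) != length:
        raise ValueError("truncated payload")
    return payload


def fuzz_one_input(data: bytes) -> None:
    """The harness: turn raw fuzzer bytes into a call into the library."""
    try:
        payload = parse_length_prefixed(data)
    except ValueError:
        return  # rejecting malformed input is expected, not a bug
    # Property check: an accepted payload must match the declared length.
    assert len(payload) == data[0]


def run_random_fuzzer(iterations: int = 10_000, seed: int = 0) -> int:
    """Stand-in for a fuzzing engine: feed random byte strings to the harness."""
    rng = random.Random(seed)
    for _ in range(iterations):
        size = rng.randrange(0, 16)
        fuzz_one_input(bytes(rng.randrange(256) for _ in range(size)))
    return iterations
```

The harness itself is only a few lines. The complexity Guido mentions comes from adding more entry points and more property checks over time.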

Vamosi: Cool. So we have a target. We have some tools. And we have Guido’s experience and creativity. But how does he start looking for vulnerabilities?

Vranken: Um, well, when I download a target, I compile it. One type of low-hanging fruit is just to run the tests and see if anything pops up. Sometimes you can run the test suite under Valgrind, so memory bugs get detected which otherwise don't get detected. That's a type of low-hanging fruit you can easily catch. Then I have some regular expressions to look through the source code for certain things which shouldn't be done, like undefined behavior and stuff like integer overflows. So looking at the source code is one way to go about it. And of course I write fuzzing harnesses: I try to divide an application into multiple parts, and then for each part I write a fuzzer. That's how I usually do it.
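A regular-expression pass over source code, as described above, might look something like the following sketch. Guido does not say which patterns he uses, so the two patterns here, and the sample C snippet they run against, are assumptions chosen only to illustrate the technique. Matches are leads for manual review, not confirmed bugs.

```python
import re

# Hypothetical patterns, chosen for illustration only.
RISKY_PATTERNS = {
    "unbounded string copy": re.compile(r"\b(strcpy|strcat|sprintf|gets)\s*\("),
    "size arithmetic in allocation": re.compile(r"\bmalloc\s*\([^;]*[*+][^;]*\)"),
}


def scan_source(source: str):
    """Return (line number, label, line text) for every line matching a pattern."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for label, pattern in RISKY_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label, line.strip()))
    return findings


# A made-up C fragment to scan.
SAMPLE = """\
char *buf = malloc(count * size);
strcpy(buf, input);
size_t n = strlen(input);
"""

if __name__ == "__main__":
    for lineno, label, text in scan_source(SAMPLE):
        print(f"line {lineno}: {label}: {text}")
```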

Vamosi: Like a lot of hackers, Guido gained experience participating in bug bounties. He started with web-based hacks, looking for cross-site scripting errors and such that you can find on websites. Eventually, though, he drifted into applications, source code, and fuzzing.

Vranken: Yeah, well, actually, some years ago when I started doing bug bounties I was also active in web-based vulnerabilities like cross-site scripting and so forth. I had fun doing that, but eventually I realized that my jam is fuzzing C++ code and other source code, and not so much that. So at some point I just stopped doing the web entirely and I'm now exclusively focused on source code auditing.

Vamosi: One more thing about fuzzing: it not only identifies crashes, but also anomalies. That’s how Heartbleed was found. After sending invalid data to OpenSSL, the return on the heartbeat function was anomalous, so that required more investigation. It’s not exactly binary, where it works or it crashes. And that’s where the more interesting vulnerabilities lie. That’s one reason why Guido uses fuzz tools: they can expose more of the anomalous behavior.

Vranken: I think fuzzing is associated with memory-unsafe languages like C and C++, but as the examples of cereal and OpenWRT and many other examples illustrate, it's also very effective for memory-safe languages like Go and Rust and even JavaScript. There are JavaScript fuzzers now, and you can fuzz in any language. And even if a bug is not a security bug, it can still be very important to find and fix.

Vamosi: So what do we mean when we talk about memory unsafe languages like C and C++?

Vranken: In C and C++ and some other languages you can manipulate the memory directly, and this has the advantage of being very fast. These languages are usually very fast compared to, say, JavaScript or Python, which are relatively slow. But the downside is that you can accidentally corrupt memory, and then your program crashes in the best case. In the worst case, it can lead to actual exploits like the one I talked about, found by Google Project Zero, where they can just hack your iPhone without you even knowing. So that's the downside of memory unsafety.

Vamosi: At the time I was preparing this episode, Google’s Project Zero disclosed a significant vulnerability in Apple’s iPhone and other connected products. Google’s Ian Beer, who first reported this vulnerability to Apple in November 2019, published a detailed technical account of how he found and developed an exploit. In brief, the vulnerability allowed anyone exploiting it to instantly take over someone’s device without touching it at all. Beer likened the vulnerability to a magic spell that allowed anyone to gain remote control. Basically iPhones, iPads, Macs and Watches use a protocol called Apple Wireless Direct Link (AWDL) to create mesh networks. These local or mesh networks are used for features like AirDrop, so you can easily beam photos and files to other iOS devices, and Sidecar, so you can quickly turn an iPad into a secondary screen. Not only did Beer figure out a way to exploit that, he also found a way to force AWDL to turn on even if the user had left it turned off. Fortunately, Apple patched part of it early in 2020, and then the rest later in the year. What’s relevant to this conversation is that Beer also released proof-of-concept exploit code, describing the vulnerability as "a fairly trivial buffer overflow programming error in C++ code in the kernel parsing untrusted data, exposed to remote attackers." So, as Guido said, memory unsafe languages like C++ allow for memory manipulation, and sometimes those manipulations can lead to pretty severe exploits.

Vranken: Memory-unsafe languages are also still very popular, especially in embedded environments like OpenWRT. Also, C++ is still very, very widely used in game engines and other things, so those languages will still be around for a long while, and we are not yet at the point where we will be using exclusively memory-safe languages.

Vamosi: Before he found the OpenWRT bug, Guido found another high-profile vulnerability, this one in cereal. Cereal is a very lightweight, widely used, general-purpose serialization library written in C++. With cereal, arbitrary data types can be turned into different representations, such as compact binary encodings, XML, or JSON. This has to be a one-to-one match, so it can’t be that I send 1000 dollars to a bank, but the bank only records 100 dollars. That wouldn’t work. Yet that was what could happen in cereal before it was patched.

Vranken: There are, in fact, a lot of other types of vulnerabilities as well, like serialization bugs. I think I explained in the blog post that cereal is a JSON encoder and an XML encoder. So if you encode a monetary value like a thousand dollars with cereal, and then you decode the serialized data and it comes out as a hundred dollars, that would be a bug, because if you send $1,000 to the bank and the bank thinks you want to send $100, that's an issue. So I focused heavily on verifying that whatever you put into cereal comes out exactly the same, and I spent quite a lot of time on that. I call it serialization symmetry, and it's actually a bug if an asymmetry exists; it could lead to severe problems, like I mentioned, if you have to send monetary amounts or whatever. If the data you send doesn't come out as exactly the same data on the other side, that's an issue. So I focused on memory bugs but also on serialization symmetry, and then other things came along as well, like the use of undefined behavior, crashes, hangs, and so forth. So yeah, those are the things I focused on.
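The serialization symmetry check described above can be sketched as a round-trip property: encode a value, decode it, and compare with the original. This sketch uses Python's standard `json` module as a stand-in for cereal, which is an assumption made purely for illustration; the helper names (`roundtrip`, `check_symmetry`, `random_value`, `fuzz_symmetry`) are hypothetical and are not taken from Guido's fuzzers.

```python
import json
import random
import string


def roundtrip(value):
    """Serialize to JSON text and decode it again."""
    return json.loads(json.dumps(value))


def check_symmetry(value) -> bool:
    """True if the value survives encode/decode unchanged."""
    return roundtrip(value) == value


def random_value(rng: random.Random, depth: int = 0):
    """Generate a random nested value built from JSON-native types."""
    kinds = ["int", "str", "bool", "none"]
    if depth < 3:
        kinds += ["list", "dict"]
    kind = rng.choice(kinds)
    if kind == "int":
        return rng.randrange(-10**6, 10**6)
    if kind == "str":
        length = rng.randrange(8)
        return "".join(rng.choice(string.ascii_letters) for _ in range(length))
    if kind == "bool":
        return rng.choice([True, False])
    if kind == "none":
        return None
    if kind == "list":
        return [random_value(rng, depth + 1) for _ in range(rng.randrange(4))]
    return {f"k{i}": random_value(rng, depth + 1) for i in range(rng.randrange(4))}


def fuzz_symmetry(iterations: int = 1000, seed: int = 0):
    """Return every generated value that failed the round-trip check."""
    rng = random.Random(seed)
    values = (random_value(rng) for _ in range(iterations))
    return [v for v in values if not check_symmetry(v)]
```

Even this toy setup shows what an asymmetry looks like: `check_symmetry({"amount": 1000})` holds, while `check_symmetry({1000: "dollars"})` does not, because JSON object keys are always strings and the integer key comes back as `"1000"`.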

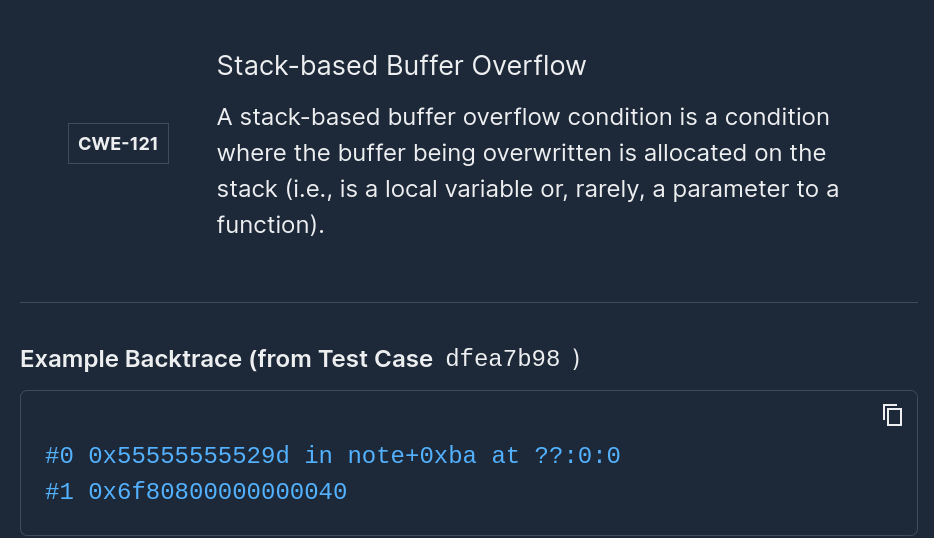

Vamosi: Okay, let’s talk about CVE-2020-7982; it affects OpenWRT. Armed with his various tools, earlier this year Guido turned his attention to OpenWRT, but he had no idea whether he’d find anything. He started with the update package manager, opkg.

Vranken: I think I wanted to fuzz the package manager, and in the process of that I found this out. For example, to fuzz the package manager, I had to disable the code that compares the hash to the binary. But I actually found out about this by accident, because it was running code that I wasn't expecting it to, because I expected that it was checking against the hash. So actually this was an accidental discovery.

Vamosi: Since OpenWRT doesn’t include SSL, opkg includes two files: one is a list of the SHA256 hash values and the other is the update package itself. Whenever the update package is run, its resulting hash should match the value on the list. However, Guido found there was a leading space in the validation process, and that triggered some interesting characteristics. For example, with that space opkg can believe the SHA256 hash is blank. And having a blank is very different from having a value that could be wrong, which would prevent the package from installing. In this case, rather than fail to install, OpenWRT simply skipped the hash validation process altogether. And that’s much more dangerous.

Vranken: Yes, the process of updating could have resulted in code execution.

If it worked as intended, then it would be very secure, but due to a bug in the code, they weren't actually verifying the SHA hash against the package, so you could bypass it entirely. So if a malicious actor was acting as a man in the middle on your connection, then he could put in some malicious code and it wasn't actually verified against the hash, so that malicious actor could execute arbitrary code on your computer.
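The kind of logic bug described here can be modeled in a few lines. This is a simplified, hypothetical model and not opkg's actual code: the function names are invented, and the only thing it tries to capture is the pattern from the episode, where a leading space makes the parsed hash come out empty and an empty hash causes verification to be skipped rather than failed.

```python
import hashlib
import string

HEX_DIGITS = set(string.hexdigits)


def parse_hex_buggy(field: str) -> bytes:
    """Decode leading hex digit pairs, stopping at the first non-hex character.

    A field that starts with a space therefore decodes to an empty result.
    """
    out = bytearray()
    i = 0
    while i + 1 < len(field) and field[i] in HEX_DIGITS and field[i + 1] in HEX_DIGITS:
        out.append(int(field[i:i + 2], 16))
        i += 2
    return bytes(out)


def parse_hex_fixed(field: str) -> bytes:
    """Same parser, but surrounding whitespace is removed first."""
    return parse_hex_buggy(field.strip())


def verify_package(package: bytes, hash_field: str, parse) -> bool:
    """Return True if the package is accepted for installation."""
    expected = parse(hash_field)
    if not expected:
        # The flaw being modeled: "no hash" is treated as "nothing to check".
        return True
    return hashlib.sha256(package).digest() == expected


if __name__ == "__main__":
    legit = b"legitimate package contents"
    tampered = b"attacker-supplied contents"
    # The hash field as it might appear after "SHA256sum:", with a leading space.
    field = " " + hashlib.sha256(legit).hexdigest()
    print(verify_package(tampered, field, parse_hex_buggy))  # accepted: check skipped
    print(verify_package(tampered, field, parse_hex_fixed))  # rejected: hash mismatch
```

In this model the hash list is correct and SHA256 itself is sound. The tampered package is accepted only because the comparison never runs, which is why this counts as a logic bug and not a memory bug.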

Vamosi: He’s talking about a person-in-the-middle attack, where a bad actor, if on the network at the same time, could inject his or her own malicious code. One might argue this is an edge case, a rare circumstance, since the updates are not automatic; they must be user initiated. But I would also argue that a vulnerability is a vulnerability even if it is not easy to exploit. It was also a different kind of vulnerability.

Vranken: Actually this is interesting because a lot of the focus in security is on memory bugs, but this wasn't actually a memory bug. This was just a small logic bug in the OpenWRT code where the hash wasn't compared to the binary. It wasn't corrupting memory or anything; it wasn't like Heartbleed. It was actually, I think, a single space that was added where it shouldn’t have been, and that led, in a Rube Goldberg-machine kind of way, to the verifying code not executing as intended. So it's an interesting example of how non-memory-safety bugs can also have severe consequences.

Vamosi: And so Guido’s sitting there with his discovery. The adrenaline is rushing. He found something BIG. While the temptation is to go public, the reality is you should always inform the vendors. This is responsible disclosure 101. Generally it is good procedure to give the vendor at least 90 days heads up. In that time, the vendor can verify the vulnerability and address it with a workaround or patch. In an ideal world. In reality, some vendors don’t think about the next step beyond releasing the code. Some don’t even have addresses at which to contact them. But, with open source, there’s usually a way to contact the owner or the project maintainer.

Vranken: Yeah, so usually the way to do this is you contact the maintainer, usually someone who is actively working on the program. So you send them a private email. In this case I reached out to the maintainer but I think he was pretty busy and he wasn't super responsive to it, so it didn't get fixed. So, I think, eventually I went with public disclosure, which means that you just publish the bug on the GitHub issue tracker. But with OpenWRT, for example, I sent an email to the security address at OpenWRT; they acknowledged it and gave it a look. They acknowledged that they were going to fix it, so then I verified that the fix worked and we set a date for public disclosure, and on that date they sent out an advisory, and everyone who was using OpenWRT was prompted to update.

Vamosi: When Guido went public in March 2020, OpenWRT had already been patched and the community was strongly encouraged to update immediately. Guido has also researched cryptocurrencies, like Ethereum.

Vranken: So, one interesting thing with cryptocurrencies is that it is a distributed system, and all the nodes, all the people who are running their client, have to respond to the network data in exactly the same way. For example, they all verify the transactions that come through. But if one client does one thing and another client does another thing, then you get a chain split, such that two groups of clients start doing different things. So it is very important that all the clients do exactly the thing that is prescribed in the protocol, and that's what I looked at with Ethereum. They have very complex code; it's actually a virtual machine that runs for each transaction, and if there is a difference between one client and another, then this can lead to chain splits, and I actually found a couple of those bugs. It's a complete VM and they have implementations in Go, Rust, and C++, and they have to do exactly the same thing. If they don't do exactly the same thing, then you can get a chain split, which is an issue because it weakens the system as well.
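Checking that several implementations "do exactly the same" is often called differential fuzzing: feed the same input to each implementation and flag any disagreement. The sketch below is a toy, not Ethereum code. The two "clients" are invented 8-bit adders, one of which deliberately saturates instead of wrapping, and `find_divergence` is a hypothetical helper name.

```python
import random

MASK = 0xFF  # toy 8-bit word size, an assumption for illustration


def client_a_add(x: int, y: int) -> int:
    """Reference behavior: addition wraps around on overflow."""
    return (x + y) & MASK


def client_b_add(x: int, y: int) -> int:
    """Deliberately divergent behavior: saturates instead of wrapping."""
    return min(x + y, MASK)


def find_divergence(impl_a, impl_b, iterations: int = 10_000, seed: int = 0):
    """Return the first (x, y) on which the two implementations disagree, or None."""
    rng = random.Random(seed)
    for _ in range(iterations):
        x, y = rng.randrange(256), rng.randrange(256)
        if impl_a(x, y) != impl_b(x, y):
            return (x, y)
    return None


if __name__ == "__main__":
    print(find_divergence(client_a_add, client_b_add))
```

Neither toy implementation crashes on any input. The bug is visible only as a disagreement between the two, which is the same shape as the consensus bugs described above.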

Vamosi: You might be wondering if Guido goes in with a plan. If he thinks there is a bug and then goes about proving it. He does. In general, in the vulnerability world, there are more deliberate proofs than accidents.

Vranken: Yeah, it's more common to set out to prove something and then find a bug or not. Accidental discoveries happen occasionally but not that often.

Vamosi: And for those of you that are thinking that Guido has been steadily working behind the scenes before he went public with any of his high-profile vulnerabilities--he’s relatively new at computer programming and vulnerabilities research.

Vranken: No, it's actually... well, I dropped out of school when I was like 14 or 15. And then, like, until I was 30, I didn't work. I did volunteer but I didn't work. I actually picked up programming when I was about 30, and then I started doing bug bounties again.

Vamosi: So it sounds like we’ll continue to have vulnerabilities in ubiquitous memory unsafe languages like C and C++, and that will keep fuzz testers like Guido very busy. At least for now.
