CVE-2022-35922: Network Applications with Some Mayhem

Most out-of-the-box fuzzing examples and tutorials walk you through fuzzing a program that accepts input over STDIN or from a file. But what if your application doesn't take input that way? Is it still fuzzable? In this post, I will explain the steps I took to fuzz an inherently network-centric target with Mayhem.

The Target

I harnessed the websocket crate, introducing fuzz testing to the almost 8-year-old codebase. This crate predates Rust 1.0 by 6 months! So what exactly are websockets?

The WebSocket Protocol enables two-way communication between a client ... to a remote host ... The protocol consists of an opening handshake followed by basic message framing, layered over TCP. -- RFC 6455

I was particularly interested in testing the error handling and robustness of clients and servers using this crate throughout that handshake and message unpacking.

The Harness

Using the excellent cargo-fuzz tool for templating and running local fuzz campaigns, I created a rudimentary harness for a server accepting a connection:

Full source code for the harnesses can be found here.

The basic idea here is that we wrap the fuzzer-provided input in a FuzzInput, which mocks a TCP connection. While Mayhem supports fuzzing over actual network IO, better performance and more analysis modes are possible when fuzz input is kept in memory.
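A minimal sketch of such an in-memory mock, using only the standard library. The field names and the choice to buffer outgoing bytes are assumptions made for illustration; the FuzzInput in the linked harness source may be written differently.

```rust
use std::io::{self, Cursor, Read, Write};

/// In-memory stand-in for a TCP stream (illustrative layout, not the harness's own).
pub struct FuzzInput<'a> {
    /// Bytes the simulated peer "sends": the fuzzer-provided input.
    incoming: Cursor<&'a [u8]>,
    /// Bytes the code under test writes back, kept only for inspection.
    outgoing: Vec<u8>,
}

impl<'a> FuzzInput<'a> {
    pub fn new(data: &'a [u8]) -> Self {
        FuzzInput {
            incoming: Cursor::new(data),
            outgoing: Vec::new(),
        }
    }

    /// Everything written to the mock connection so far.
    pub fn written(&self) -> &[u8] {
        &self.outgoing
    }
}

impl<'a> Read for FuzzInput<'a> {
    fn read(&mut self, buf: &mut [u8]) -> io::Result<usize> {
        // Returns Ok(0) once the fuzz input is exhausted, like a closed socket.
        self.incoming.read(buf)
    }
}

impl<'a> Write for FuzzInput<'a> {
    fn write(&mut self, buf: &[u8]) -> io::Result<usize> {
        // Writes never fail and never block; the data is simply recorded.
        self.outgoing.extend_from_slice(buf);
        Ok(buf.len())
    }

    fn flush(&mut self) -> io::Result<()> {
        Ok(())
    }
}
```

Any code that is generic over `Read + Write` can then be driven from a byte slice without opening a socket.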

The .use_protocol method tells our websocket listener to accept a "rust-websocket" value in the Sec-WebSocket-Protocol header. The actual value specified is not very important, except when building up a corpus, which we will get to later.

After .accept()ing the handshake, the harness will then attempt to read a message, which includes handling multiple dataframes.

The actual outcome of these operations is not to be relied upon (since we are about to send it malformed inputs of course!), so any functions that report an error will simply return early. We are hoping that this error handling is sufficient to keep our simulated websocket server running even when faced with terribly formed, or even well-crafted inputs. If the harness panics, crashes, or gets killed by the OS, we will investigate the root cause and determine if our harness code is to blame, or if there is a flaw in the crate.

A harness allowing us to fuzz client code was also written:

Performance of the First Harness

As we can see from the coverage graph, progress flatlines around 60 testcases that have unique coverage:

Since coverage seemed low, I checked what the generated testcases roughly looked like:

I also set a static key in the example client and the client fuzz harness. This ensures that the recorded handshake response from the server will be correct when we later present it to the client during fuzzing.

Data sent from the client is saved to the client folder, and data from the server to the server folder. This means that files in the client folder should be presented as example inputs to the server fuzz harness, and vice versa. Though in reality, it wouldn't hurt to just add every session as testcases to both harnesses, because of the corpus minimization step.

With those example interactions added to the testcases, we have much better coverage for both harnesses! Here's the coverage graph for the server harness:

Triaging Crashes

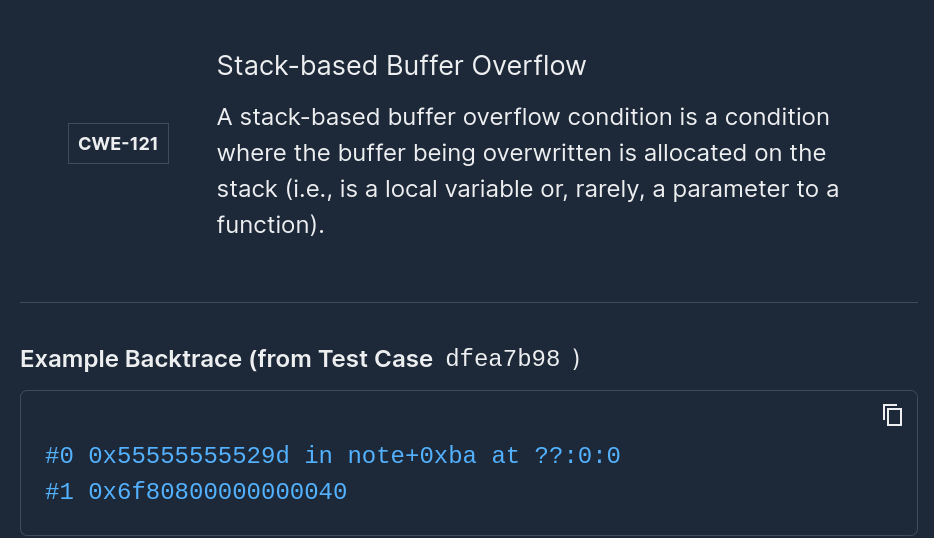

Those two red dots on the coverage graph show detected defects, so I then opened up the crash interface. Mayhem's triage analysis was spot on: it identified the issue as CWE-789: Uncontrolled Memory Allocation and provided a backtrace.

The root cause in the backtrace was this call to Vec::with_capacity. Pre-allocation is a good strategy to increase performance, but the requested capacity must be bounded whenever an external source can influence or directly supply it. header.len is deserialized from the network and can report a length up to u64::MAX, so it definitely should be checked before allocating.
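To make the exposure concrete, here is a simplified sketch of how a frame's declared payload length is decoded under RFC 6455, section 5.2. The function name `declared_payload_len` is made up for illustration and this is not the crate's parser; it ignores the opcode, masking, and the RFC's rule that the top bit of a 64-bit length must be zero.

```rust
/// Decode the payload length declared at the start of a WebSocket frame.
/// Returns None if the header is truncated.
pub fn declared_payload_len(frame: &[u8]) -> Option<u64> {
    // The low 7 bits of the second byte hold either the length itself
    // or a marker saying that an extended length field follows.
    let short = *frame.get(1)? & 0x7F;
    // Interpret a run of bytes as a big-endian unsigned integer.
    let be = |bytes: &[u8]| bytes.iter().fold(0u64, |acc, &b| (acc << 8) | b as u64);
    match short {
        126 => Some(be(frame.get(2..4)?)),  // 16-bit extended length
        127 => Some(be(frame.get(2..10)?)), // 64-bit extended length
        n => Some(n as u64),                // lengths 0..=125 are stored inline
    }
}
```

Under this encoding, a ten-byte header is enough to declare a payload of u64::MAX bytes, and that is the value that reaches the allocation if nothing checks it first.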

The other defect had the same root cause, but was triggered by a different allocation size. This target was compiled with libFuzzer and ASAN, both of which have allocation size checks, only at different thresholds. A nice thing about working with Mayhem is that it is generally good at grouping crashes, but in this case it had to show one crash that was detected by libFuzzer, and another crash that was detected by ASAN.

The Fix

The fix is present in this commit, which checks a threshold before pre-allocating. This is the best mitigation for CWE-789, though its effectiveness depends on how closely the allocation threshold matches the application-specific usage.
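A minimal sketch of that kind of guard, assuming the policy is to reject oversized frames outright. The function `read_payload` and its `max_len` parameter are hypothetical names, not the crate's API, and the linked commit may structure its check differently.

```rust
use std::io::{self, Read};

/// Read exactly `declared_len` payload bytes, refusing lengths above `max_len`.
pub fn read_payload<R: Read>(
    reader: &mut R,
    declared_len: u64,
    max_len: u64,
) -> io::Result<Vec<u8>> {
    // Check the untrusted length before it can influence an allocation.
    if declared_len > max_len {
        return Err(io::Error::new(
            io::ErrorKind::InvalidData,
            "declared payload length exceeds limit",
        ));
    }
    // Safe to pre-allocate now: the capacity is bounded by max_len.
    let mut buf = Vec::with_capacity(declared_len as usize);
    reader.by_ref().take(declared_len).read_to_end(&mut buf)?;
    // A short read means the peer sent less than it declared.
    if buf.len() as u64 != declared_len {
        return Err(io::Error::new(
            io::ErrorKind::UnexpectedEof,
            "payload shorter than declared",
        ));
    }
    Ok(buf)
}
```

An alternative policy is to cap only the pre-allocation and let the buffer grow as bytes actually arrive; either way, the limit should reflect the largest message the application legitimately expects.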

As a side note, Rust's support for fallible allocation would not be a suitable fix here. A crafty attacker can still probe what the remote system is able to allocate by observing whether the connection is dropped or not. Then (perhaps using multiple connections) the attacker can push the server process's allocator to the brink of running out of memory, at which point even small allocations elsewhere risk aborting the process.
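For reference, a fallible allocation with the standard library's `Vec::try_reserve_exact` looks roughly like the sketch below (the wrapper `try_payload_buffer` is a hypothetical name). It turns an impossible request into an `Err` instead of an abort, but any size the allocator can currently satisfy still succeeds, so nothing limits what a peer may ask for.

```rust
use std::collections::TryReserveError;

/// Try to allocate a buffer of the declared size, reporting failure as an error.
pub fn try_payload_buffer(declared_len: usize) -> Result<Vec<u8>, TryReserveError> {
    let mut buf = Vec::new();
    // Fails on capacity overflow or allocator refusal, but imposes no policy limit.
    buf.try_reserve_exact(declared_len)?;
    Ok(buf)
}
```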

Thanks

I sincerely thank the websocket maintainers for responding and releasing a fix so quickly. They suggest tungstenite for new websocket applications in Rust, but they clearly value the security of users that haven't switched crates yet.

Links to Advisories

- GitHub Security Advisory

- RUSTSEC

- cargo-audit can report if you are vulnerable to this issue

Try Mayhem yourself on websocket!

Create a free Mayhem account, fork the GitHub repo, build the code, and run Mayhem as part of your CI/CD builds.

