Challenging ROI Myths Of Static Application Security Testing (SAST)

There are several benefits to using Static Application Security Testing (SAST) to secure your software. Having previously worked at Coverity (now Synopsys), I’m intimately familiar with the arguments in favor of SAST. While there have been many successes (such as adoption in the OSS community through Coverity Scan), I’ve also seen organizations struggle to adopt SAST as part of their development process. Increasingly, customers I talk with are looking for SAST alternatives. Why? In theory, analyzing source code and inferring potential defects during the build seems like a real step forward in software quality. In practice, I can think of at least six challenges with this form of analysis, and they raise questions about the efficacy of SAST for organizations focused on immediate benefits.

SAST does not analyze the actual executable or binary; it typically analyzes a model of your program built from the source code. No matter how good the analysis, this approach inevitably produces some false positives (FPs), including defects reported on paths that the program would never actually execute in a live system.
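
As an illustration (a hypothetical snippet, not taken from any real codebase), consider a function whose safety depends on an invariant its callers maintain. An analysis that does not track the link between `has_name` and `name` may report a possible `None` dereference on a path that can never run:

```python
from typing import Optional


def format_greeting(name: Optional[str], has_name: bool) -> str:
    """Hypothetical helper: callers guarantee has_name == (name is not None)."""
    if has_name:
        # A path-insensitive analysis sees that `name` may be None here and can
        # flag `name.upper()`; at runtime this branch only runs when name is set.
        return "Hello, " + name.upper()
    return "Hello, stranger"


def greet(name: Optional[str]) -> str:
    # The only call site derives the flag from `name`, so the
    # "None dereference" path is infeasible in practice.
    return format_greeting(name, name is not None)


print(greet("ada"))  # Hello, ADA
print(greet(None))   # Hello, stranger
```

A type checker such as mypy, for instance, flags the `name.upper()` call here even though it cannot fail as the program is written. Each such report still has to be read and dismissed by a person.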

Why does this matter? For small programs, FPs may be manageable, but what happens with codebases of 10 million lines of code (MLoC) or more? Some of the industry’s best SAST checkers are designed to keep FP rates below 5%. If we apply a common estimate of 15-50 errors per 1,000 lines of code (KLoC), as cited in Steve McConnell’s Code Complete, a 10 MLoC codebase would yield approximately 150,000-500,000 potential defects identified by SAST!

At a 5% FP rate, approximately 7,500-25,000 of these findings would be FPs, and that assumes your SAST tool is a good one.
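
The arithmetic behind these figures is easy to check. The sketch below treats the FP rate as exactly 5%, which is an assumption: the text only says the best checkers aim to stay below it.

```python
from typing import Tuple


def estimate_findings(kloc: int, defects_per_kloc: int, fp_percent: int) -> Tuple[int, int]:
    """Return (potential defects, expected false positives) for a codebase."""
    findings = kloc * defects_per_kloc
    # Integer arithmetic keeps the result exact (5% of 150,000 is exactly 7,500).
    false_positives = findings * fp_percent // 100
    return findings, false_positives


KLOC = 10_000  # 10 MLoC expressed in thousands of lines

for rate in (15, 50):  # McConnell's 15-50 errors per KLoC
    findings, fps = estimate_findings(KLOC, rate, fp_percent=5)
    print(f"{rate} errors/KLoC: {findings:,} findings, {fps:,} FPs")
```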

Six Problems

This leads to six practical problems:

- Effort: How much effort is required to review all the defects? Given the number of defects SAST identifies, it becomes clear that identification is only a small part of the problem. A substantial effort is needed first to triage and classify the defects by severity, followed by developer effort (which also requires contextual expertise) to remediate each one. Addressing SAST findings can therefore require a large amount of developer time, a large number of developers, or both.

- Focus: How much developer time must be devoted to curating and remediating defects? That time comes at the expense of other work, and this impact on developer productivity must be factored into the cost of adopting SAST.

- Waste: How much of this developer effort will ultimately be wasted on FPs, with no measurable improvement in the security of the application?

- Trust: What psychological effect will FPs have on developers? Over time, developers find it difficult to trust the results SAST produces. Even when real defects are found, FPs become associated with wasted effort. This stems from a fundamental limitation of SAST: it can identify a defect but provides only partial context about how that defect might be triggered. SAST never provides what a developer most wants, a reproducer or test case that both verifies the defect exists and confirms its absence once a fix is applied. Instead, the developer must reason about the defect and its impact abstractly.

- Priority: How do you decide which SAST findings to fix first? That is difficult unless you have the expertise to understand the implications of each defect (even if it has been tagged with a CWE). Why? Because SAST does not provide a test case that reproduces the defect, and reproducibility is the litmus test most developers apply before considering a defect actionable. Identifying the line of code where a failure occurs, together with a test that reproduces that failure, is the gold standard for actionability.

- Timeline: How long will it take to fix these defects? Given the volume of defects SAST uncovers, adoption is an organizational undertaking that takes time to implement successfully. It may require multi-year investment before the quality of shipped software measurably improves.
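
To make the Effort and Waste problems concrete, here is a back-of-envelope sketch. The 10 minutes of triage per finding is an arbitrary, hypothetical figure, not a measurement; substitute your own team’s numbers.

```python
def triage_hours(findings: int, minutes_per_finding: int) -> float:
    """Hours needed just to triage `findings`, before any fix is written."""
    return findings * minutes_per_finding / 60


# Hypothetical assumption: 10 minutes to triage one finding (not a measured value).
MINUTES_PER_FINDING = 10

# Low end of the 10 MLoC estimate: 150,000 findings, 7,500 of them FPs at 5%.
total_hours = triage_hours(150_000, MINUTES_PER_FINDING)
wasted_hours = triage_hours(7_500, MINUTES_PER_FINDING)

print(f"triage: {total_hours:,.0f} hours, of which {wasted_hours:,.0f} are spent on FPs")
```

Even under this optimistic assumption, triage alone comes to 25,000 hours, before any remediation work begins.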

Given these six problems, it is fair to ask: does SAST effectively improve security at today’s pace of software evolution? Many organizations adopt SAST simply to claim that assurance or quality tools were part of their development process, especially when they must meet externally imposed compliance criteria. Compliance, however, is not security.

While there are defects SAST excels at uncovering (think linting and configuration checks that prevent insecure use of certain functionality), these problems limit SAST’s effectiveness in today’s rapid mode of software development, where the amount of source code is growing exponentially.

Analyzing ever-larger codebases this way, and triaging the results, exceeds human scale. Another approach is required.


Enter Fuzzing

Unlike SAST, which does not analyze the actual executable or binary, fuzzing always tests running code. Modern fuzzers autonomously generate inputs, send them to the target application, and monitor how it behaves. When the target behaves unexpectedly (for example, by crashing), that is a sign of an underlying defect. This method is effective for defect discovery because the defects it reports are not theoretical weaknesses but actual, reproducible behaviors with real consequences.
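
To make that loop concrete, here is a deliberately naive black-box fuzzer in plain Python. It is a sketch, not how production fuzzers work: tools such as libFuzzer and AFL are coverage-guided, mutating inputs that reach new code rather than drawing purely random bytes. The `parse_record` target and its planted bug are hypothetical.

```python
import random
from typing import Callable, Optional, Tuple


def parse_record(data: bytes) -> int:
    """Hypothetical target: byte 0 is a magic 'R', byte 1 a payload length."""
    if len(data) < 2 or data[0] != ord("R"):
        return 0  # not a record; rejecting it is expected behavior
    length = data[1]
    payload = data[2:2 + length]
    # Planted bug: trusts the length byte instead of len(payload).
    return payload[length - 1] if length else 0


def fuzz(target: Callable[[bytes], object], iterations: int = 100_000,
         max_len: int = 16, seed: int = 0) -> Optional[Tuple[bytes, Exception]]:
    """Feed random inputs to target; return the first input that raises."""
    rng = random.Random(seed)  # a fixed seed makes the run reproducible
    for _ in range(iterations):
        size = rng.randrange(max_len + 1)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except Exception as exc:  # an unexpected exception is our "crash" signal
            return data, exc
    return None


result = fuzz(parse_record)
if result is not None:
    crash_input, error = result
    print(f"crash: {type(error).__name__} on input {crash_input!r}")
```

The returned input is itself the reproducer: calling `parse_record(crash_input)` raises the same exception every time.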

Developers benefit from fuzzing in a number of ways:

- A crashing input can serve as a reproducer, both to verify that the unexpected behavior exists and to validate any potential fix. It can also be added to existing quality assurance tests and processes to ensure that behavioral regressions are not introduced in future versions of the software.

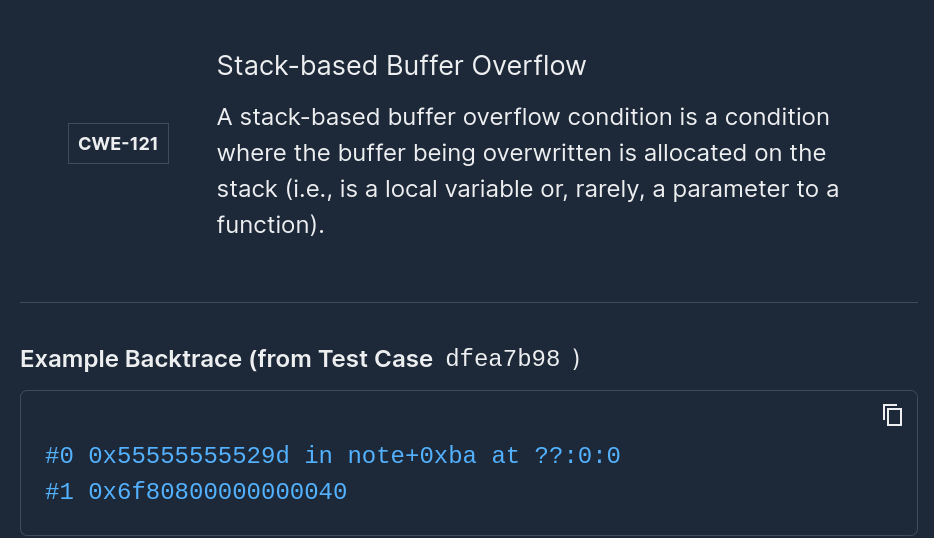

- Additional diagnostic context can guide remediation. Fuzzing can identify the line of code where the failure occurs, the call stack leading to the offending function, and register/memory state to aid diagnosis.

- The defect type can be mapped to a CWE, which further aids triage and prioritization by indicating the defect’s likely impact on the software.

- Additional analysis can determine side effects associated with a defect (such as operations that manipulate memory in potentially unsafe ways), providing further context for judging its severity.

- Vulnerability researchers increasingly use fuzzing to find attack vectors.
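
A minimal sketch of the first two points, assuming a fuzzer has already saved a crashing input: replaying it reproduces the failure and, via Python’s standard `traceback` module, yields the failing functions and line numbers. `decode_fields` and its fixed variant are hypothetical examples; a fuzzer for native code would instead report a symbolized stack trace and, with sanitizers enabled, memory state.

```python
import traceback
from typing import Callable, List, Optional, Tuple


def decode_fields(data: bytes) -> int:
    """Hypothetical target: 2-byte big-endian count, then `count` one-byte fields."""
    count = int.from_bytes(data[:2], "big")
    return sum(data[2 + i] for i in range(count))  # bug: trusts `count`


def decode_fields_fixed(data: bytes) -> Optional[int]:
    count = int.from_bytes(data[:2], "big")
    if len(data) < 2 + count:
        return None  # reject truncated input instead of reading past the end
    return sum(data[2:2 + count])


def reproduce(target: Callable[[bytes], object],
              crash_input: bytes) -> Optional[Tuple[str, List[Tuple[str, int]]]]:
    """Replay a saved input; return (exception type, [(function, line), ...])."""
    try:
        target(crash_input)
    except Exception as exc:
        frames = traceback.extract_tb(exc.__traceback__)
        return type(exc).__name__, [(f.name, f.lineno) for f in frames]
    return None  # no failure: the defect is absent


CRASH_INPUT = b"\x00\x03\x01"  # claims 3 fields but carries only 1

print(reproduce(decode_fields, CRASH_INPUT))
# Regression check: the saved input must no longer fail after the fix.
assert reproduce(decode_fields_fixed, CRASH_INPUT) is None
```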

Put together, the test case, reproducer, diagnostic context, and demonstrated efficacy give developers everything they need to remediate an identified defect. Because every issue comes with an input that triggers it, issues identified with fuzzing are inherently actionable.

Another clear benefit of fuzzing is that testing can now happen at machine scale. When unit testing was introduced to the SDLC, it fundamentally changed how software was developed. Fuzzing is the next evolution, this time with machines generating test cases instead of developers reasoning about every possible edge case in the code. Rather than spending developer time handcrafting unit tests, developers can write test harnesses that the fuzzer drives with automatically generated inputs, free of the biases of handwritten cases. These inputs uncover unexpected conditions that lead to crashes. Fuzzing can significantly reduce the cost of maintaining tests, since advanced fuzzers dynamically generate test suites with minimal investment of developer time.
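
A harness is typically a single entry point that receives fuzzer-generated bytes and exercises the code under test. The sketch below follows the `TestOneInput` convention used by Atheris, Google’s Python fuzzer; the `escape`/`unescape` pair is a hypothetical example, and the harness checks a round-trip property so that a wrong result fails as loudly as a crash.

```python
import sys


def escape(s: str) -> str:
    """Hypothetical code under test: escapes backslashes and commas."""
    return s.replace("\\", "\\\\").replace(",", "\\,")


def unescape(s: str) -> str:
    out, i = [], 0
    while i < len(s):
        if s[i] == "\\" and i + 1 < len(s):
            out.append(s[i + 1])  # drop the escape character, keep the next one
            i += 2
        else:
            out.append(s[i])
            i += 1
    return "".join(out)


def TestOneInput(data: bytes) -> None:
    """Fuzz entry point: the fuzzer supplies `data`; we check a property."""
    text = data.decode("utf-8", errors="replace")
    # Property: unescaping an escaped string must return the original.
    if unescape(escape(text)) != text:
        raise AssertionError(f"round-trip failed for {text!r}")


if __name__ == "__main__":
    import atheris  # third-party: requires `pip install atheris`

    atheris.Setup(sys.argv, TestOneInput)
    atheris.Fuzz()
```

Running the fuzzer requires Atheris, but `TestOneInput` is an ordinary function, so the same harness can also be called directly with a saved input in a normal test suite.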

In addition, the accumulated, dynamically generated inputs form a suite that can be incorporated into regression testing before deployment. This makes fuzzing easy to incorporate into a modern DevSecOps workflow.
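
A sketch of that regression step, assuming the fuzzer’s generated inputs have been saved as individual files in a corpus directory: replay each one and fail the pipeline if any still triggers an exception. The directory layout and the `first_field_length` target are hypothetical. (libFuzzer-built binaries offer a native equivalent: passing individual input files on the command line runs the target on just those inputs.)

```python
import sys
from pathlib import Path
from typing import Callable, List


def first_field_length(data: bytes) -> int:
    """Hypothetical target: the first byte gives the length of the first field."""
    return data[0] if data else 0  # empty input is handled, not a crash


def replay_corpus(corpus_dir: Path, target: Callable[[bytes], object]) -> List[str]:
    """Run every saved input through target; return names of inputs that fail."""
    failures = []
    for path in sorted(corpus_dir.iterdir()):
        if not path.is_file():
            continue
        try:
            target(path.read_bytes())
        except Exception:
            failures.append(path.name)
    return failures


if __name__ == "__main__":
    # Usage in CI: python replay.py path/to/corpus
    failed = replay_corpus(Path(sys.argv[1]), first_field_length)
    if failed:
        sys.exit(f"regressions: {', '.join(failed)}")
```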

Given all these benefits, a fuzzing-first strategy offers immediate improvements to the security posture of most software.

Fuzz your software before someone else fuzzes it for you. Want to learn more? Contact us for a demo.

