Applying Cyber Grand Challenge Technology To Real Software

I first heard about Mayhem when I read that researchers at my university, Carnegie Mellon, had reported 1200 crashes in Debian, just by running their binary analysis system on Debian programs for 15 minutes at a time. When I learned that the technology developed by those researchers was spun out as a startup, ForAllSecure, I knew I had to get involved.

My joining ForAllSecure coincided with the company’s preparation for the DARPA Cyber Grand Challenge, the world’s first automated hacking competition. We spent thousands of hours extending the Mayhem system over two years to prepare for the competition, with significant focus on improving its core, the symbolic executor. Symbolic execution is the process of taking a program and generating test cases by tracking how inputs are used and mathematically solving for new inputs based on a deep understanding of what the code is doing with the input. Contrast this with fuzzing, which combines high-level feedback with high-speed testing to find new inputs.
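To make the idea concrete, here is a minimal sketch of the "track how inputs are used, then solve for new inputs" loop. It is a toy, not how Mayhem works internally: the `target` parser, the `SymByte` wrapper, and the `explore` driver are all hypothetical names invented for this illustration, and the "solver" only handles equality checks against a single byte.

```python
class SymByte:
    """A concrete byte that also remembers which input position it came from."""

    def __init__(self, index, value, trace):
        self.index, self.value, self.trace = index, value, trace

    def __eq__(self, const):
        taken = self.value == const
        # Record the branch condition as (input index, constant, outcome).
        self.trace.append((self.index, const, taken))
        return taken


def target(data):
    """Hypothetical parser: fails only if three bytes match at the same time."""
    if data[0] == 0x7F:
        if data[1] == 0x45:
            if data[2] == 0x4C:
                raise RuntimeError("bug reached")
    return "ok"


def run(concrete):
    """Execute the target on tracked bytes; return the branch trace and crash flag."""
    trace = []
    tracked = [SymByte(i, b, trace) for i, b in enumerate(concrete)]
    try:
        target(tracked)
        return trace, False
    except RuntimeError:
        return trace, True


def explore(seed, max_runs=16):
    """Flip each failed comparison by solving for the byte that satisfies it."""
    worklist, seen, runs = [list(seed)], set(), 0
    while worklist and runs < max_runs:
        inp = worklist.pop()
        if tuple(inp) in seen:
            continue
        seen.add(tuple(inp))
        runs += 1
        trace, crashed = run(inp)
        if crashed:
            return bytes(inp), runs
        for index, const, taken in trace:
            if not taken:
                # "Solving" byte[index] == const is trivial in this toy.
                new_input = list(inp)
                new_input[index] = const
                worklist.append(new_input)
    return None, runs


crash_input, runs = explore([0, 0, 0])
print(crash_input, runs)  # b'\x7fEL' 4
```

Each run extends the input by exactly one solved branch, so the nested check is reached in four executions. A real symbolic executor handles arithmetic, memory, and many constraint types by handing the recorded conditions to a constraint solver.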

After CGC's very public demonstration of the possibilities for automated software analysis, we started receiving inquiries from a wide range of companies wanting to know how this would work for their applications. Most of these projects involve software we cannot discuss publicly, so we also decided to continue applying our technology to open source programs whose results we could share. Some of our engineers had run a few small-scale experiments on well-known and well-fuzzed binaries and found new bugs in OpenSSL (CVE-2016-7053) and sthttpd (CVE-2017-10671). While I think these are good demonstrations of what Mayhem can do for real-world software, I was itching to try additional experiments that would look at a single piece of software in more depth.

After reviewing several open source projects, I settled on testing objdump. This program is a normal part of a developer or security researcher’s workflow, providing insight into the contents of a binary program, such as which functions and assembly instructions it contains. At its core is libbfd, which has been fuzzed significantly in the recent past. Its history of bug reports suggested it was ripe for additional fuzzing enhanced by symbolic execution: many bugs had been reported and fixed, but reports had tailed off significantly in recent months. This suggested that most of the bugs visible to existing fuzzing tools had already been found and patched. If Mayhem discovered any more bugs, that would be a strong indicator that it can find things other tools cannot.

Results

Our testing found over 10 bugs in about 60 total hours on my workstation with an 8-thread CPU. Over the course of a few days, I triaged and reported the bugs each morning, then merged the fixes and restarted testing. A single bug can manifest in multiple ways, and triggering multiple crashes because the same buggy pattern appears in several places inflates the bug count without being compelling, so I wanted to be as fair as possible about the number of bugs. I therefore reported only 1-2 bugs per binary format in each report; once those were fixed, any additional crashes were clearly the result of other, distinct bugs. Libbfd’s excellent maintainer helped by supplying patches that fixed not only the specific bugs but entire bug patterns.

| Function | Bug class | Impact | CVE |
| --- | --- | --- | --- |
| `bfd_cache_close` | Heap UaF | Memory Corruption | CVE-2017-12448 |
| `alpha_vms_object_p` | Heap OOB W | Memory Corruption | CVE-2017-12450 |
| `bfd_mach_o_read_symtab_strtab` | Heap OOB W | Memory Corruption | CVE-2017-12459 |
| `_bfd_vms_slurp_egsd` | Arbitrary Read | Memory Disclosure | CVE-2017-12454 |
| `bfd_mach_o_i386_canonicalize_one_reloc` | Heap OOB R | Memory Disclosure | CVE-2017-12452 |
| `read_symbol_stabs_debugging_info` | Heap OOB R | Memory Disclosure | CVE-2017-12456 |
| `_bfd_vms_slurp_eeom` | Heap OOB R | Memory Disclosure | CVE-2017-12453 |
| `_bfd_vms_save_sized_string` | Heap OOB R | Memory Disclosure | CVE-2017-12449 |
| `evax_bfd_print_emh` | Heap OOB R | Memory Disclosure | CVE-2017-12455 |
| `nlm_swap_auxiliary_headers_in` | Heap OOB R | Memory Disclosure | CVE-2017-12458 |
| `_bfd_xcoff_read_ar_hdr` | Stack OOB R | Memory Disclosure | CVE-2017-12451 |
| `bfd_make_section_with_flags` | Null Deref | Crash | CVE-2017-12457 |

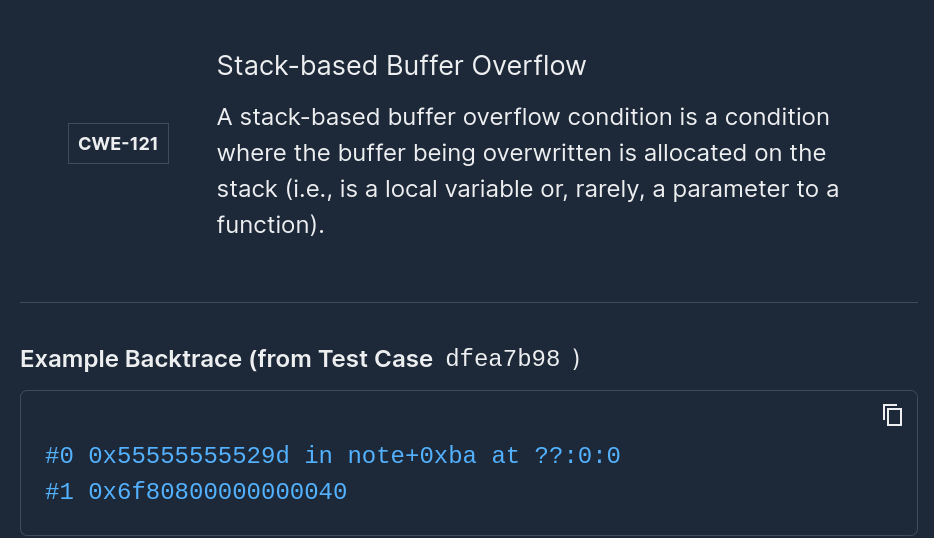

In this chart, the red entries represent bugs where memory corruption occurred. This means they are potentially exploitable to run an attacker’s code. The orange and yellow entries represent memory disclosure, which means information in the program’s memory may be visible to an attacker. Finally, the green entry is a bug that can only crash the program.

Why were we able to find so many overlooked bugs? These are not recent regressions, and coverage-guided fuzzing has been applied to the same code. In the VMS module, we found a bug almost immediately that had somehow been missed by others who had reported bugs in that same area of code only a few weeks earlier.

At ForAllSecure, we believe the answer lies in the confluence of fuzzing and symbolic execution. Symbolic execution shines most when several constraints must hold on an input simultaneously. Although it is significantly slower per iteration than fuzzing, every synthesized seed is guaranteed to take a unique path, whereas a fuzzer generates mutated tests very quickly but many of them are duplicates or meaningless.
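The duplication problem is easy to see on a toy example. The sketch below assumes a hypothetical three-byte header check (`path_of`) and a blind random generator (`blind_fuzz`), which is deliberately weaker than a real coverage-guided fuzzer. It counts how many distinct branch paths a large number of random executions actually exercise.

```python
import random


def path_of(data):
    """Return the branch outcomes of a hypothetical three-byte header check."""
    path = []
    for i, magic in enumerate((0x7F, 0x45, 0x4C)):
        taken = data[i] == magic
        path.append(taken)
        if not taken:
            break  # later checks are never reached
    return tuple(path)


def blind_fuzz(executions, seed=0):
    """Run random 3-byte inputs and count how often each path is taken."""
    rng = random.Random(seed)
    paths = {}
    for _ in range(executions):
        data = bytes(rng.randrange(256) for _ in range(3))
        path = path_of(data)
        paths[path] = paths.get(path, 0) + 1
    return paths


paths = blind_fuzz(10_000)
print(len(paths), "distinct paths from", sum(paths.values()), "executions")

# A uniformly random input passes all three checks with probability 1 / 256**3.
print("expected random tries to pass all checks:", 256 ** 3)
```

Only four paths exist in this check, so nearly all of the 10,000 executions repeat a path that was already seen. Coverage feedback narrows that gap considerably in practice, but the underlying contrast is the same: one approach spends cheap executions on many repeats, the other spends expensive executions on new paths only.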

During CGC, we and other teams found that a combination of testing strategies is more effective than either alone. The relationship between practitioners of the two approaches has been a little rocky, with skepticism on both sides: fuzzing is seen as effective but shallow, and symbolic execution as heavyweight and, by itself, not always scalable to real-world software. Both sides really want the same result, which is to find high-impact bugs.

I suspect the two approaches will ultimately converge. This experiment reflects only the superficial effect of merging corpora between guided fuzzing and symbolic execution, so there is a great deal of room for improvement, and we’re excited to continue pioneering this approach in our Mayhem product.
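For readers unfamiliar with the term, merging corpora can be as simple as pooling the inputs produced by both tools and keeping only those that reach something new. The following is a generic sketch of that idea, not Mayhem's implementation: `coverage` is a hypothetical stand-in for an instrumented run of the target, and the branch names and sample inputs are made up.

```python
def coverage(data: bytes) -> frozenset:
    """Hypothetical stand-in for an instrumented run: the branch IDs an input hits."""
    hit = {"entry"}
    if len(data) >= 1 and data[0] == 0x7F:
        hit.add("magic0")
        if len(data) >= 2 and data[1] == 0x45:
            hit.add("magic1")
    if len(data) > 8:
        hit.add("long_input")
    return frozenset(hit)


def merge_corpora(*corpora):
    """Pool several corpora, keeping only inputs that add new coverage."""
    merged, seen = [], set()
    for corpus in corpora:
        for item in corpus:
            new_branches = coverage(item) - seen
            if new_branches:
                merged.append(item)
                seen |= new_branches
    return merged, seen


# Made-up seeds: the fuzzer found long inputs, the symbolic executor found the magic bytes.
fuzz_corpus = [b"\x00", b"A" * 16, b"B" * 16]
symex_corpus = [b"\x7f\x00", b"\x7fE"]

merged, seen = merge_corpora(fuzz_corpus, symex_corpus)
print(len(merged), "inputs kept, covering", sorted(seen))
```

The merged corpus can then be handed back to both tools as their next set of seeds, so that each one starts from the paths the other has already opened up.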

Interested in trying out Mayhem yourself on your software? Contact us about starting a pilot here.
